How to Implement an Enterprise Context Layer: A Phased Guide for Real Data Estates

TL;DR

- Implementing an enterprise context layer is a progression along a four-stage maturity ladder, not a single project:

- Institutional knowledge (Stage 1, 16% of organizations)

- Harnessed metadata (Stage 2, 21%)

- A single pane of glass for humans and machines (Stage 3, 24%)

- Accurate context for AI agents (Stage 4, 39%)

- A working data catalog is not an alternative to a context layer. It puts an organization at Stage 2 or Stage 3, which means most of the infrastructure work is already done and the implementation question is how to advance to Stage 4, not how to start from scratch.

- The phased path is sequenced: Ingest metadata across the estate, establish ownership and quality, enrich the substrate with business meaning, expose it to consumers through APIs and MCP servers, then govern and refine in steady state. Each phase produces the substrate the next phase depends on.

- Pinterest‘s internal analytics agent became the most-used internal tool at the company within two months of launch, but the implementation timeline that mattered started in years before with substrate work on DataHub. There is no shortcut around the upstream infrastructure.

If you searched “how to implement an enterprise context layer,” you have probably already read the standard answer: stand up a vector database, pick an embedding model, layer in retrieval-augmented generation, filter out irrelevant context, optimize the context window, expose it through an MCP server. None of that is wrong. None of it is a plan, either. It’s a list of techniques that operate on context, not a sequence for producing context in the first place.

We treat implementation as an infrastructure question first and an AI question second. And you are probably further along than you think. If your organization runs a data catalog, has lineage across its core systems, or has anything resembling a working business glossary, you are not starting from scratch. You are somewhere on a maturity curve, and the implementation question is where you are on that curve and what it takes to advance.

What you are actually implementing—what is an enterprise context layer?

Quick definition: What is an enterprise context layer?

An enterprise context layer is the governed interface that exposes business meaning, ownership, lineage, quality, and policy from across the data estate to the humans and AI agents that need to reason over it.

Before walking into the how, the what needs to hold still. An enterprise context layer is the governed, queryable interface between your data estate and the systems, both human and agentic, that need to reason over it. It exposes business meaning, ownership, lineage, quality, and policy in a form that AI systems can consume reliably and that humans can audit.

A context layer is not a vector database. It is not a knowledge graph. It is not an MCP server. Those are components or interfaces. The layer itself is the substrate they draw from, plus the governance that makes the substrate trustworthy. Conflating the layer with any single component is the first place implementations go sideways, because it lets teams convince themselves they have implemented something when they have only deployed a tool.

The job of the implementation is to produce context that is relevant to the question being asked, reliable enough to act on, and retained over time, so the layer accumulates institutional memory rather than starting fresh every session.

Context management is also distinct from context engineering. Context engineering is the practice of building prompts and retrieval pipelines for individual AI applications. Context management is the enterprise-wide capability those engineered pipelines draw from, and it is what this piece is about implementing.

- For a fuller treatment of what a context layer does and where it sits in an AI stack, see our piece on the context layer for AI.

- For the distinction from a semantic layer, see context layer vs semantic layer.

The four stages of context management evolution

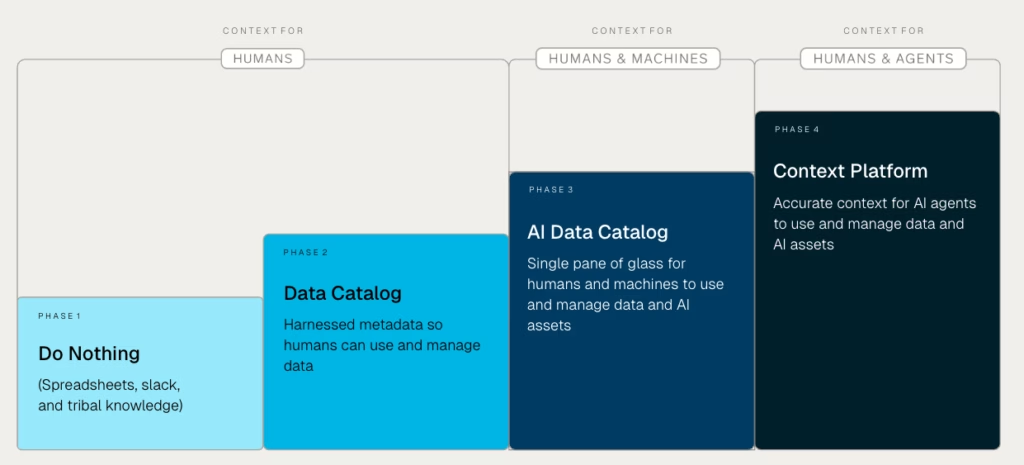

Implementing an enterprise context layer is not a binary state. It is a progression, and DataHub’s recent State of Context Management Report 2026 maps it as a four-stage ladder. Most organizations are already somewhere on the ladder, not starting from zero.

The stages are:

- Stage 1: Institutional knowledge. Context lives in spreadsheets, Slack threads, Teams channels, and the heads of senior engineers. There is no system of record.

- Stage 2: Harnessed metadata. Metadata is captured and made available so humans can find and manage data. This is the data catalog era.

- Stage 3: A single pane of glass for humans and machines. Metadata is unified, governed, and accessible to both human users and the machines that need to operate on data and AI assets. The same governed view serves an analyst opening a dashboard and a service querying the catalog through an API.

- Stage 4: Accurate context for AI agents. The context layer is reliable enough for autonomous agents to use and manage data and AI assets at enterprise scale. Definitions, lineage, ownership, quality, and policy are all queryable, current, and trusted.

Perception vs reality: why self-assessment inflates the ladder

When the State of Context Management Report 2026 asked 250 IT and data leaders to place their organization on this ladder, 39% self-reported at Stage 4. Another 24% claimed Stage 3. On the self-assessment alone, 63% of organizations are operating at maturity levels where AI agents should be reading from a trusted context layer in production.

The operational evidence tells a different story. The same respondents reported that 87% cite data readiness as a significant impediment to putting AI into production, 61% frequently delay AI initiatives due to a lack of trusted and reliable data, and 66% report AI models generating biased or misleading insights because of low infrastructure maturity.

These numbers do not coexist with a Stage 4 population. Organizations with governed, agent-ready context do not delay AI for lack of trusted data, because that is the problem Stage 4 is supposed to have solved. The gap between what leaders report and what their teams experience is the most useful signal in the report, because it tells you that most organizations are probably one or two stages below where they would place themselves.

This is the part that matters for an implementation plan: If you self-assess at Stage 4 but your teams are losing time to data discovery, your AI pilots are stalling at the trust boundary, and your agents produce plausible but wrong answers, you are probably at Stage 2 or Stage 3, not Stage 4.

The honest starting point is the one that makes the rest of the plan work. Vendors pitching 60-day context layer rollouts are selling the Stage 4 exposure layer over a foundation their customer has not built yet, and the timeline collapses the moment that becomes visible.

How to figure out where you actually are

Match the telltale signs to the stage that fits, then jump to the phase of the implementation path where your work starts. Everything before that phase is work you have already done.

Here’s the final HTML with all links in place: “`html| Stage | Telltale signs | Start at |

| Stage 1 | Context lives in spreadsheets, Slack, and senior engineers’ heads. No catalog, no cross-system lineage. New hires learn data by asking people. | Phase 1: Inventory and unify metadata |

| Stage 2 | A working data catalog exists. Lineage is in place across core systems. Ownership is assigned for production assets. The catalog is still a human-facing tool. | Phase 2: Establish ownership, contracts, and quality |

| Stage 3 | Governed metadata is exposed to both humans and machines through APIs, SDKs, or equivalents. At least one downstream service is consuming it programmatically. | Phase 4: Expose the context layer to consumers |

| Stage 4 | AI agents are reading from the layer in production. A funded team maintains it. Feedback from agents and users is actively improving accuracy. | Phase 5: Govern, refine, and close the loop |

A phased path from your current stage to a Stage 4 context layer

The phases below map onto the stage ladder:

- Phase 1 is the work that gets a Stage 1 organization to Stage 2

- Phases 2 and 3 are the work that takes a Stage 2 organization to Stage 3

- Phases 4 and 5 are the work that takes a Stage 3 organization to Stage 4

They are sequenced, but they are not a fresh five-step project for a blank slate. Use the telltale signs table above to find your starting phase, and treat everything before it as already done.

Phase 1: Integration: inventory and unify metadata across the estate

Connect the systems that already hold metadata: warehouses, lakes, BI tools, dbt or your transformation framework of choice, orchestration, existing catalogs. The value of this phase is breadth: the more sources the platform can ingest natively, the less custom connector work the implementation team takes on.

As a baseline, a serious metadata platform should support at least 100 pre-built connectors covering warehouses, lakes, BI, transformation, orchestration, and adjacent systems like CRMs and knowledge bases. The output is a unified metadata graph, not a context layer yet. You are after a single queryable surface for what data exists, where it lives, and how it flows.

The exit signal is straightforward: a person, or a process, can ask “show me everything downstream of this table” and get an answer that holds across systems.

Phase 2: Quality: establish ownership, contracts, and quality signals

Context without ownership is trivia. This phase assigns domains and named owners, instruments quality and freshness checks, and starts capturing usage. Where it makes sense, formalize the producer-consumer relationship through data contracts so changes upstream cannot silently break the meaning downstream.

The exit signal is that every asset you intend to expose to an agent has an owner, a quality state, and a known set of consumers. Implementations stall here more than anywhere else, because ownership is a social problem disguised as a metadata one.

Phase 3: Curation: enrich the substrate with business meaning

Glossary terms, classifications, descriptions, tier labels, sensitive-data tagging, lineage-propagated semantics. This is where the metadata graph becomes a context graph. AI-assisted enrichment is appropriate here, and increasingly necessary at scale, but the pattern that holds up in production is AI-drafted, expert-reviewed, not auto-published.

The exit signal is that an analyst or an agent landing on a key asset can read what it means, who owns it, where it came from, and how it relates to the metrics that depend on it.

Phase 4: Activation: expose the context layer to consumers

APIs, SDKs, MCP servers, native integrations into the tools agents and analysts already use. This is the phase most people picture when they say “context layer,” and it is short if phases one through three are done.

It is also the phase Atlan’s “Connect, Bootstrap, Certify, Activate” pitch is built around. Their timelines hold when the substrate, the ownership model, and the consumers on the other end are already in place. No tool creates those preconditions, which is why the 60-to-90-day claim is really a statement about customer readiness dressed up as a statement about product capability.

For a complement to this phase from the consumer side, see context engineering vs prompt engineering.

Phase 5: Governance: refine, govern, and close the loop

Feedback from agents and analysts feeds back into ownership, glossary, and quality. Decisions get logged. Stale definitions get flagged. New assets enter the substrate and inherit governance from day one. Over time, this is how the context layer becomes institutional memory rather than a snapshot. There is no exit signal for this phase. It is steady state, and it is the phase that determines whether the context layer keeps working in year two.

Where implementations stall

The failure modes are consistent enough to be worth naming.

- Starting with the agent instead of the substrate: The prototype works because the prototype only has to answer five rehearsed questions. Production has to answer the sixth.

- Treating the layer as a one-time project: No funded ownership of refresh, no feedback loop, no plan for what happens when the data estate changes. Context definitions go stale, accuracy decays in months, and trust is much harder to rebuild than to lose.

- Over-modeling the ontology before any consumer exists: Six quarters spent on a perfect schema for an agent that never ships. The right move is to model the slice the first consumer needs, ship it, and let real usage drive the next slice.

- Skipping ownership: You can have lineage and a glossary and no owners. The layer will work until the first time something is contested, and then it will not.

- Buying the exposure layer without the substrate: The 60-day deployment claim is real, for the customers who already did the substrate work. For everyone else, it is a 60-day exposure project bolted onto a missing foundation.

- Bolting security on after exposure: Access controls have to be enforced at the context layer’s retrieval interface, before any context reaches the agent. Filtering responses downstream is leaky and audit-hostile, and it makes the layer’s permissions impossible to reason about as soon as more than one agent is consuming it. The context layer is the natural control point for authentication, authorization, and audit logging; treating it as one is the difference between a layer you can govern and a layer you have to defend.

What a finished implementation looks like in production

Pinterest is the cleanest public example, and the lesson is about timeline.

Pinterest’s internal analytics agent became the most-used internal tool at the company within two months of launch, with roughly 10x the usage of the next most-used agent and coverage across about 40% of their analyst population. The model is impressive. The model is also not the implementation.

The implementation started years earlier. Pinterest had built governed table tiering across more than 100,000 tables, propagated business glossary terms across 40%+ of columns through join-based lineage, and reverse-mapped query history into semantic descriptions of analytical intent, all on DataHub as the system of record. When the analytics agent shipped, it was reading from a substrate that had been under construction for years. The agent was the visible part of a project that had mostly already happened.

The lesson is not “build like Pinterest.” The lesson is that when an implementation looks fast from the outside, the substrate work is somewhere else in the timeline. There is no shortcut. The only question is whether the work is happening before the agent ships, or after it fails.

Implementing an enterprise context layer is an exposure problem over infrastructure that should already exist, not a greenfield enterprise AI build. The four stages of context management evolution make that concrete: most organizations are already at Stage 2 or Stage 3, which means the implementation question is how to advance to Stage 4 from where you are, not whether to start from zero.

If you have a working data catalog, you are not behind. You are halfway there, and the next stage is the work in front of you.

Future-proof your data catalog

DataHub transforms enterprise metadata management with AI-powered discovery, intelligent observability, and automated governance.

Explore DataHub Cloud

Take a self-guided product tour to see DataHub Cloud in action.

Join the DataHub open source community

Join our 14,000+ community members to collaborate with the data practitioners who are shaping the future of data and AI.

FAQs

Recommended Next Reads