What Is a Context Catalog? Why Data Catalogs Aren’t Enough for the AI Era

Quick definition: What is a context catalog?

A context catalog is a governed metadata layer that makes enterprise knowledge about data assets discoverable and usable by both humans and AI agents. Unlike traditional data catalogs built for human search, a context catalog unifies technical metadata, business definitions, documentation, and policies into a continuously updated graph that can be accessed programmatically through APIs, MCP servers, and SDKs.

If you searched for “context catalog” and landed on a page about data catalogs, that’s not a coincidence. The term barely exists in most vendors’ vocabularies yet. But the gap itself is the point. Data catalogs were built for a world where humans searched for tables. That world is being overtaken by one where AI agents need to retrieve, evaluate, and act on enterprise context without a human in the loop. The distance between those two worlds is the distance between a data catalog and a context catalog.

Why the term “context catalog” is showing up now

AI agents are the forcing function. An analyst browsing a catalog UI can read a description, check a lineage diagram, and make a judgment call about whether a dataset is trustworthy. An AI agent can’t do any of that. It needs metadata that’s machine-readable, semantically structured, and accessible through programmatic interfaces.

At the same time, context engineering is formalizing as a discipline. As organizations move from prompt engineering (crafting inputs to a single LLM call) to context engineering (curating the full knowledge environment an AI system operates in), the question of where enterprise context lives and how it gets delivered becomes unavoidable.

Context windows compound the pressure. Every token passed into an LLM’s context window has a cost, and what gets included determines output quality. Organizations need a system that can surface the most relevant, trustworthy metadata on demand, not a static repository that dumps everything and hopes the model sorts it out.

Meanwhile, the tools that most organizations already have aren’t keeping up. Even modern data catalogs are built around a search-and-browse paradigm: a human types a keyword, scans a results list, clicks into a dataset, reads the description, checks the lineage diagram. That workflow assumes a person in the loop at every step. When an AI agent needs to determine whether a dataset is trustworthy, compliant, and appropriate for a task, it can’t follow that workflow. It needs structured, governed context delivered through an interface it can parse.

Regulatory pressure is adding urgency. Frameworks like GDPR and the EU AI Act require column-level visibility into how data flows through AI systems, the kind of traceability that static catalogs weren’t designed to provide.

The market is responding. According to our State of Context Management Report 2026, 88% of organizations have formally included context management architecture in their AI strategy, and 89% are likely to invest in context management infrastructure within the next 12 months. The catalog layer is where much of that investment will land first.

Context catalog vs. data catalog: What actually changed

The difference isn’t cosmetic. Data catalogs and context catalogs serve fundamentally different audiences, and that difference shapes everything from the metadata model to the access pattern.

- A data catalog was designed for human data consumers. It optimizes for search, browse, and manual documentation. Metadata is typically descriptive (table names, column types, ownership tags) and updated through periodic ingestion jobs. The value of a data catalog is directly tied to whether technical and business users adopt it, and adoption has historically been the biggest challenge.

- A context catalog is designed for both human and machine consumers. It optimizes for programmatic access, semantic richness, and governed delivery. Metadata isn’t just descriptive but relational: business context, quality signals, lineage, policies, and documentation are all connected in a graph that AI agents can traverse. Updates are event-driven, so the catalog always reflects operational reality rather than a stale snapshot.

| Data catalog | Context catalog | |

| Primary audience | Human data consumers | Humans and AI agents |

| Asset scope | Tables, views, dashboards, pipelines | Data assets + AI/ML models, features, training datasets |

| Metadata model | Descriptive (schema, tags, descriptions) | Semantic and graph-based (relationships, business meaning, policies) |

| Update mechanism | Periodic batch ingestion | Event-driven |

| Access pattern | UI search and browse | API, MCP servers, SDKs, plus UI |

| Governance scope | Data access policies | Data + AI agent policies |

| Value driver | Team adoption | AI system reliability and output quality |

This isn’t an abstract distinction. Two of these differences deserve particular attention:

- The update mechanism matters more than it appears. A data catalog that ingests metadata on a nightly batch schedule is fine for a human checking a dataset’s lineage on Tuesday morning. It’s not fine for an AI agent making a decision at 2 a.m. based on metadata that may have been stale for hours. Event-driven sync is a requirement, not a nice-to-have.

- Governance scope expands significantly. Data catalogs govern who can access which data. Context catalogs also need to govern which AI agents can access which context, what actions those agents can take based on the context they retrieve, and whether the context itself meets quality and compliance thresholds before it’s served, including controls over sensitive data. This is a fundamentally different governance surface.

When 57% of organizations are duplicating AI efforts across departments due to the lack of a comprehensive, unified context graph (State of Context Management Report 2026) and teams can’t even agree on what data exists across the organization, the absence of a shared, machine-readable context layer has direct operational costs.

What a context catalog actually needs to do

Defining the concept is one thing. Building one that works requires getting three things right.

1. Unify technical metadata and business knowledge

Most data catalogs stop at technical metadata for traditional data assets: schemas, column types, lineage. They rarely extend to AI and ML assets like models, feature stores, or training datasets. A context catalog needs to go further. It has to connect technical metadata with business definitions, data quality signals, governance policies, runbooks, and documentation from tools like Confluence, Notion, and internal wikis.

This is the difference between telling an AI agent that a column is called rev_q3_adj and telling it that the column represents adjusted quarterly revenue, is owned by the finance team, was last validated two hours ago, and is subject to SOX compliance requirements. The second version is context. The first is just a label.

DataHub Cloud’s unified context platform does this by automatically connecting metadata from 100+ data sources (Snowflake, Databricks, dbt, Looker, and others) with documentation from Notion and Confluence. One graph links tables, dashboards, docs, glossary terms, and domains. Context Documents let teams create runbooks, FAQs, policies, and definitions directly in DataHub and link them to the relevant data assets so that context stays connected rather than buried in wikis.

2. Stay current without manual effort

The failure mode of legacy catalogs is staleness. If the catalog doesn’t reflect reality, nobody trusts it. And if nobody trusts it, nobody uses it. AI agents make this problem worse because they’ll act on stale metadata without questioning it.

Event-driven architecture is the fix. Rather than running batch ingestion on a schedule and hoping nothing changed between runs, an event-driven system captures metadata changes in near real-time. DataHub’s architecture is built on this principle: metadata syncs regularly from connected systems so the context graph reflects operational reality.

3. Serve AI agents as a first-class consumer

This is where most existing catalogs fall short. They were built with a UI-first mindset, and programmatic access was bolted on later. A context catalog needs to treat AI agents as first-class consumers from day one.

That means exposing the context graph through MCP servers so that tools like Claude, Cursor, and Windsurf can search and act on trusted enterprise context directly. It means providing SDKs and integrations (DataHub’s Agent Context Kit works with Snowflake Cortex, LangChain, and Google ADK) so teams can build custom agents with full access to organizational knowledge.

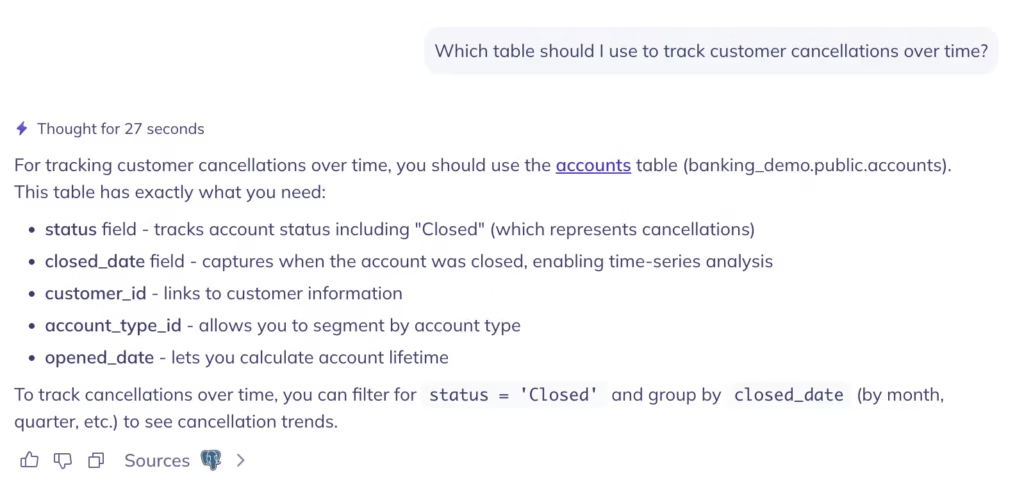

And it means offering natural language discovery through tools like Ask DataHub, which lets anyone find data, trace lineage, debug issues, and generate SQL using plain language, whether they’re in the DataHub UI, Slack, or Teams.

Ask DataHub has genuinely shifted how our people discover and understand data. Instead of needing to know the exact table name or the ‘right’ terminology, anyone can just describe what they’re looking for in plain language and get pointed to the right assets.

— Lynne C., Head of Data Enablement, Xero

Why a context catalog alone isn’t enough

Here’s where the conversation usually stops: define the context catalog, explain how it differs from a data catalog, describe the features. But a context catalog is the system of record for enterprise context, not the entire system.

Delivering context reliably at enterprise scale requires infrastructure around the catalog. You need:

- Governance to control who and what can access which context (not just data access policies but AI agent policies)

- Observability to know whether the context is accurate, fresh, and complete

- Lineage to trace where context came from and understand downstream impact when it changes

- Agent management to track which AI agents are consuming which context, monitor their behavior, and enforce policies across an increasingly autonomous ecosystem.

This is the difference between a catalog-centric approach and a platform-centric approach. A catalog-centric approach treats the context catalog as the destination. A platform-centric approach treats it as one layer within a broader context management capability.

The data supports the platform approach. According to the State of Context Management Report 2026, 93% of organizations are likely to treat context as shared infrastructure rather than team-specific tooling. And 53% frequently experience AI-related compliance issues caused by lack of data provenance, a problem that cataloging alone can’t solve without governance and lineage around it.

What leading teams are doing with context catalogs today

The organizations moving fastest aren’t waiting for the category to formalize. Over 3,000 organizations already use DataHub’s open-source data catalog to manage their metadata, and the pattern among the most mature is consistent.

- Netflix reimagined discovery and governance at scale with DataHub, giving teams a unified view across their entire data landscape and simultaneously laying the groundwork for agentic AI.

- Pinterest operationalized data governance with DataHub, pruning 400,000 tables down to 100,000 — and then put that clean, governed foundation to work as the central context platform behind its most-used internal AI agent.

- Apple uses DataHub with custom entities and connectors to manage machine learning metadata across its ML platform, tracking the full lifecycle from training data to production AI features.

These teams didn’t start by building a context catalog from scratch. They started with the catalog layer (unified metadata, programmatic access, governed discovery) and expanded into the broader platform capabilities (observability, lineage, agent management) as their AI initiatives matured. The context catalog was the foundation, not the ceiling.

For teams evaluating their own readiness, the question isn’t whether you need a context catalog. If you’re running AI agents at any scale, you do. The question is whether your current catalog can serve machine consumers as effectively as it serves human ones. If the answer is no, the gap between those two worlds is where your context management strategy begins.

Future-proof your data catalog

DataHub transforms enterprise metadata management with AI-powered discovery, intelligent observability, and automated governance.

Explore DataHub Cloud

Take a self-guided product tour to see DataHub Cloud in action.

Join the DataHub open source community

Join our 14,000+ community members to collaborate with the data practitioners who are shaping the future of data and AI.

FAQs

Recommended Next Reads