Context Management for Data Analysts

What Comes After AI Writes the SQL

TL;DR

The data analyst role is evolving from purely querying data to curating the business context that makes data trustworthy for both humans and AI agents. Translating business questions into SQL (the skill that built the profession) is being commoditized by LLMs, and context management is the new point of leverage.

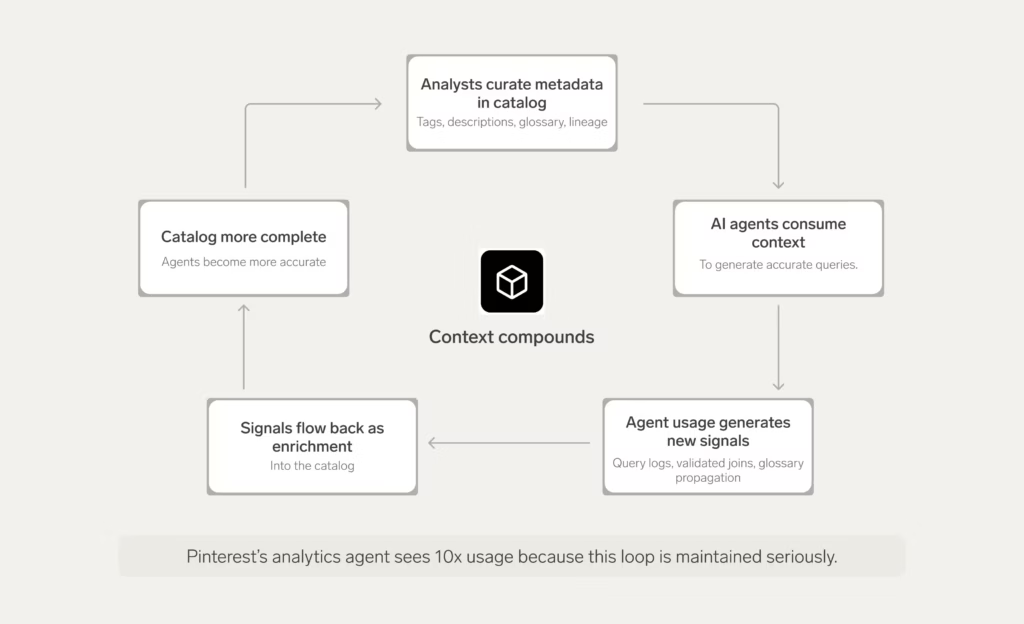

Organizations that treat their data catalog as a metadata warehouse, a structured store of everything an agent needs to produce trustworthy results, are seeing dramatically better AI outcomes. Pinterest’s analytics agent sees 10x usage of any other internal tool because the context layer behind it is maintained seriously.

Context management is not a departure from what strong analysts already do. It is a reorientation of where the value lies, from writing queries to governing the environment that makes AI-generated queries accurate.

A quote from my friend Britton Stamper stopped me mid-scroll recently:

“The gap that separated business questions from working queries is closing fast now that LLMs can write decent SQL. It’s happening fast enough that all my data analyst friends are feeling an urgent need to evolve their skills and uplevel their toolkits in order to stay relevant in the AI era.” — Britton Stamper, Push.ai

Gartner predicts that organizations will abandon 60% of AI projects in 2026 due to a lack of AI-ready data, and we see that the bottleneck is quickly shifting from hiring human beings who can write SQL to something else entirely. LLMs need human beings to impart their understanding of the data environment into a metadata environment structured around their businesses in order to write correct SQL to accurately answer business questions.

That “something else” is context management.

What happens now that AI can write SQL?

LLMs can now write decent SQL, but only when they have access to the metadata that tells them how the business actually works. A model needs to know which tables are authoritative, how metrics are defined, and how data flows across systems before it can answer a business question correctly. That kind of context lives in metric specifications, semantic layers, lineage graphs, ownership records, and business glossaries. None of it sits inside the warehouse, and all of it has to be built and maintained by humans.

dbt Labs ran fresh benchmarks in 2026 that show this dynamic clearly: text-to-SQL backed by a structured semantic layer approached near-perfect accuracy, while the same models running against unmodeled tables performed dramatically worse.

The instinct most data people have when confronted with a situation like your tech skills becoming less scarce/valuable is to act like an ostrich and bury their head into the sand.

They’ll say things like “I understand this business in ways AI does not. I know that the Q3 numbers look weird because of a migration. I know that ‘revenue’ in the CRM is defined differently than ‘revenue’ in the finance system. No LLM will ever understand what I understand so I’m going to be fine.”

There is a parallel here to when Microsoft Excel launched in the mid-1980s. There were worries about fewer junior roles being hired to do manual calculations, and people further along in their careers needing to learn all new software. But Excel actually ended up increasing the demand for analysts who could learn it and use it effectively. AI might feel a bit scarier for the short term because it does more of the human-like thinking, but ultimately, both tools can be productivity multipliers for data analysts.

Here’s the comfort in that parallel: the models are getting better at writing SQL, but they cannot invent the business context that makes the SQL correct. That context is exactly what data analysts are best positioned to build, and building it is becoming the most important work in the profession.

The new responsibility taking shape: Context management

What is context management?

Context management is the ongoing discipline of building and maintaining the metadata layer that AI agents need to reason accurately about business data. It includes defining authoritative metrics, governing business terminology, maintaining data lineage and quality signals, and connecting unstructured institutional knowledge to structured data assets. Where context engineering is a systems-level discipline focused on infrastructure (see The Data Engineer’s Guide to Context Engineering), context management is the operational practice data analysts perform day to day to keep that infrastructure accurate, current, and trustworthy.

A new responsibility for data analysts is beginning to take shape in the organizations that are furthest along in deploying AI to production data workflows around context management. It does not yet have a catchy name like “ETL” or “Data Viz” quite yet, though Gartner did name context engineering a strategic priority. A few formal job postings are beginning to appear that mention it as well.

Context management is about creating the metadata layer that sits between raw data and AI-generated output. Data analysts are no longer just tasked with writing the queries that answer business questions; they now also have to build the environment in which AI agents can reason about the data ecosystem and write queries themselves.

An AI agent reasoning about business data needs more than a schema:

- It needs to know which tables are authoritative and which are deprecated

- It needs to understand that “active user” means different things across different product surfaces

- It needs to know that last week’s engagement drop was caused by a monitoring incident, not a real behavior change

- It needs column-level lineage, ownership records, quality assertions, business definitions, and the semantic relationships between entities

None of that is in the warehouse. All of it has to be built, maintained, and made accessible.

What context management adds to the data analyst job description

The work of context management in a metadata warehouse is not a departure from what strong data practitioners already do. It is a reorientation of where the value lies and the tooling that makes it easier to sustain.

In practice, that reorientation is showing up in four areas of the analyst’s day-to-day work:

1. Metric architecture

Defining authoritative business metrics and making those definitions machine-readable is central to the work. When an AI agent generates a query about retention, it needs to know exactly what retention means in this organization, what filters apply, what tables are authoritative, and what the correct business logic is. That definition needs to exist in a system the agent can access, not in a document somewhere.

In practice, that machine-readable definition looks something like this:

| Business term | Definition | Owner | Associated data asset |

| Net revenue | Total sales minus returns | Finance team | prod_sales.net_amt |

| Active user | Logged in within 30 days | Product team | user_logs.last_login |

The table is illustrative, but the principle is the point: every business term an analyst or agent might query needs to map to a specific column or transformation, with a named owner and a clear definition. Anything less, and the agent is guessing.

2. Semantic modeling

Building the abstraction between raw warehouse tables and the questions the business actually asks. This goes beyond transformation logic. It means capturing how KPIs relate to each other, how business entities connect across systems, and what conditions govern accurate results.

3. Context enrichment and feedback loops

Structured data represents only part of the picture. The full context of why a metric moved often lives in Slack messages, incident reports, product changelogs, and CRM notes.

A context manager designs systems that connect unstructured context to structured data and (critically) captures analyst behavior as signal. Every query pattern, every reused join, every validated golden query is evidence about how the business works. Building systems that learn from that evidence is part of the job.

4. Trust and governance

An AI agent reasoning over stale or incorrect metadata does not fail loudly. It produces answers that are subtly wrong in ways that are hard to detect. Maintaining the accuracy and currency of the context layer (including data quality assertions, ownership records, and lineage documentation) is an ongoing operational responsibility, not a one-time setup task.

What context management looks like at Pinterest

Pinterest’s engineering team described this problem precisely in a recent architecture post.

A new analyst joining a data team, they observed, cannot accurately answer mission-critical business questions on day one. They spend weeks reading old Slack threads, reverse-engineering queries from BI dashboards, and building a mental model of which tables to trust and what terms actually mean across the organization. An AI agent faces exactly the same problem — it starts with an empty context window and needs access to the same institutional knowledge, or it will produce answers that are structurally correct and substantively wrong.

Pinterest’s solution was to build a structured semantic layer of sorts. It wasn’t just designed to create facts, dimensions, and metrics. It is actually a governed store of metadata about what their data means, not just where it lives, but a marrying of technical and business context. The result is an analytics agent that now sees ten times the usage of any other internal tool at the company because it can give trustworthy results.

What Pinterest also demonstrated is that this layer can improve over time through deliberate design. Their team analyzed historical query logs and automatically propagated glossary terms across more than 40% of their columns without manual tagging. They used an LLM to reverse-map historical queries into semantic descriptions of the business questions those queries were designed to answer—effectively turning every query ever run by an analyst into a signal that makes the next analyst, or agent, more accurate.

The catalog enriches the agent; the agent’s usage enriches the catalog. The result is an infrastructure that compounds in value rather than decaying and you don’t saddle the data analyst with toilsome work of keeping the catalog up to date manually. For a deeper analysis of how Pinterest’s approach maps to a modern context platform, my colleague John Joyce wrote a companion piece on the DataHub blog.

Why context management needs real infrastructure

The framing that positions AI as a threat to the data analyst misidentifies where the real change is happening. The query authoring is being automated by AI, but the judgment about what should be measured, how it should be defined, and whether the infrastructure that supports AI is the new point of leverage.

Context management makes enterprise AI reliable at scale and I argue that it is a more consequential job than writing queries. The organizations that recognize this transition early (and invest in developing context tooling and uplevelling their people to use them well) will be the ones whose AI investments actually deliver what was promised.

Here’s the part most teams underestimate: managing enterprise context at this scale is not something a person, or even a team, can sustain by hand. You’re looking at tens of thousands of tables, hundreds of contributors, business logic that changes every quarter, and a rate of metadata churn that defeats spreadsheets and wiki pages within a week. The metadata layer has to be real infrastructure—a system that captures context automatically, exposes it to humans and agents through APIs and tool calls, and learns from how it’s being used.

That’s the work we’ve been building DataHub to do.

“Before implementing DataHub, we received a lot of different inquiries about data… Since implementing DataHub, we’ve mostly found that we don’t experience this challenge anymore. A lot of these ad hoc inquiries are down to maybe one at most per day.” – Nathan Siao, Data Analyst, HashiCorp

The flywheel Pinterest spent years assembling (query history mined for patterns, glossary terms propagated across the data estate, golden queries surfaced as ground truth, lineage and governance signals that tell agents which sources to trust) are the primitives DataHub provides without requiring you to engineer them yourself.

Most organizations don’t have Pinterest’s runway. They shouldn’t need it.

If you’re feeling the shift I’ve described in this post and want to see what context management looks like on infrastructure built for it, come talk to us.

Future-proof your data catalog

DataHub transforms enterprise metadata management with AI-powered discovery, intelligent observability, and automated governance.

Explore DataHub Cloud

Join a live group demo to see DataHub Cloud in action.

Join the DataHub open source community

Join our 15,000+ community members to collaborate with the data practitioners who are shaping the future of data and AI.

FAQs

Recommended Next Reads