AI Agent Onboarding: The Missing Discipline Behind Agents That Actually Work

TL;DR

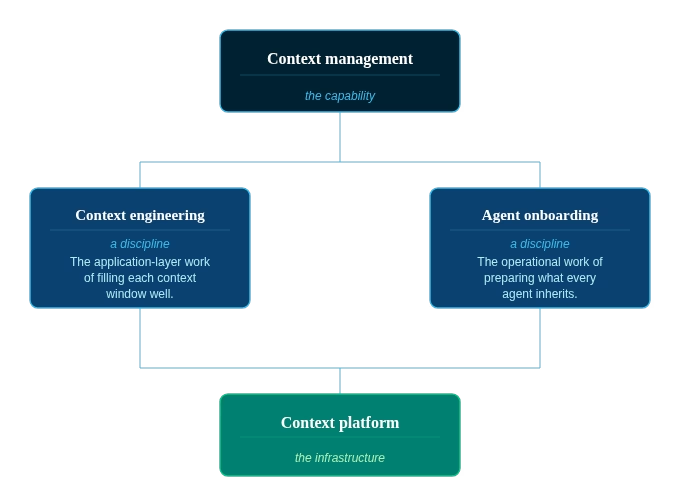

- AI agent onboarding is the discipline of preparing the organizational context an agent inherits the moment it comes online, so it has access to trustworthy, current, and governed information from its first query.

- It’s distinct from context engineering, which curates what goes into a model’s context window. Onboarding prepares the upstream knowledge, trust signals, and feedback loops the new agent draws from.

- Agent onboarding is only possible when context management creates shared infrastructure: a context platform that every agent and team draws from, not a context layer each team rebuilds independently.

The industry has spent two years on context engineering: Token windows, prompt design, retrieval pipelines, all real work, all worth doing. What almost no one is talking about is the upstream problem that makes the engineering matter in the first place.

Most AI agents in production today have credentials and system access on day one and no context. They’ve been handed the equivalent of a badge and a laptop. They can technically do things. They have no idea which things to trust, which definitions are canonical, or which pipelines were broken last Tuesday. So they do what any new hire without onboarding would do.

They confidently pull from whatever they find first, and sometimes they get it wrong.

The missing piece has a name: Agent onboarding. It’s the operational discipline that determines whether the context an agent inherits is trustworthy, current, and governed, and it’s only possible when context management is treated as shared infrastructure rather than something each team rebuilds. It decides whether the agents you deploy actually work in production or quietly burn down your team’s trust.

Context engineering (and what it can’t do)

Context engineering is real, and the people doing it well are doing serious work. The discipline covers the work of filling the context window well: system prompts, retrieved documents, tool definitions, structured outputs, conversation history, and memory. Done well, it’s the difference between an agent that responds usefully and one that wanders.

The boundary of context engineering is what it can’t reach. It works on the context it’s handed. It can’t tell you whether that context is current, whether the dataset it’s pulling from is the canonical source, whether the metric definition came from the team that owns it or the team that disagreed with the team that owns it. Those questions sit upstream of the window.

The context that you sourced, that you thought was good enough, it could be completely missing the nuance of the business.

That’s the gap. Context engineering is application work. Agent onboarding is what happens when an agent lands in an organization that’s doing the platform work, which the industry calls context management. One can’t substitute for the other, and treating them as the same thing is how organizations end up with agents that pass demos and fail in production.

What agent onboarding actually means

Quick definition: What is AI agent onboarding?

The discipline of preparing the organizational context an agent inherits the moment it comes online, so whatever it sees is trustworthy, current, and governed. Where context engineering curates what goes into the context window, agent onboarding prepares the upstream knowledge that fills it.

Three components do the actual work. None of them are new ideas. What’s new is the recognition that agents need them in the same way new employees do, attached to the assets the agent will encounter, available the moment it comes online.

1. Capturing institutional knowledge

Most of what an agent needs to know was never written down. It lives:

- In the head of the analyst who’s been on the team for six years and remembers why the second customer_id column exists

- In a Slack thread from last quarter where the marketing team and the finance team finally agreed on what counts as an active user

- In the muscle memory of the data engineer who knows that the prod_events table is canonical and the three other tables with similar names are deprecated, even though none of them are flagged that way.

A new hire learns this through proximity. They sit next to people, they ask questions, and over months they build a working map of the organization. Agents don’t get months. They get the first query.

The job of capturing institutional knowledge is the job of externalizing this, in pieces small enough to be useful, attached to the assets the agent will actually encounter. Not buried in a wiki nobody updates. Not dumped into 40-page onboarding documents the agent has to parse on every call.

This last point matters more than people give it credit for. The most useful thing we learned when building our own internal agents wasn’t about model quality. It was that long-form documentation is a terrible teacher. Agents learn the way new hires do, through accumulated small corrections from the people around them, not by reading the employee handbook front to back. The context graph should get richer every time someone corrects an agent, and the next agent should inherit the correction without anyone having to file a ticket about it.

In DataHub, this is what Context Documents are for: runbooks, FAQs, business definitions, and institutional knowledge linked directly to the data assets, glossary terms, and domains they apply to. Knowledge attached where agents will encounter it, not stored where agents have to go looking for it.

2. Establishing trust signals

A new hire learns quickly which colleagues to trust on which topics, which dashboards are current, which spreadsheet is the actual source of truth, and which four are stale copies someone forgot to delete. That intuition is what separates a useful contributor from a confident one. Agents need the same intuition, and the only way they get it is if the signals are encoded in the metadata.

When an agent queries a dataset and gets back ownership, freshness, quality score, downstream consumer count, and deprecation status alongside the data itself, the reasoning changes. Suddenly, the agent knows whether to use the dataset, when to defer, and when to flag uncertainty rather than generating an answer. Last refreshed two hours ago, owned by the data engineering team, 0.95 quality score, twelve downstream consumers, flagged as deprecated for analytics use after Q2 2025: that’s not noise around the data, it’s the context the agent needs to do its job responsibly.

Without trust signals, agents default to confidence. They have to. They have no other mode. Adding the signals is what gives them the ability to be appropriately uncertain, which is what most production use cases actually require. The same metadata layer that delivers trust signals also enforces access controls. Onboarding an agent doesn’t bypass governance; it makes governance visible to the agent so it can respect the same policies a human analyst would.

3. Building the feedback loop

Onboarding doesn’t end on day one for human employees and it doesn’t end on day one for agents either. The data landscape moves. Pipelines break, schemas evolve, definitions get sharpened, and ownership changes hands. Context that was current last quarter is stale this quarter, and without real-time visibility into these changes, an agent operating on stale assumptions makes confident mistakes faster than a human paying attention can catch them.

The difference between human onboarding and agent onboarding is what happens to the corrections: When a human gets corrected, the lesson stays mostly inside their head. When an agent gets corrected, the lesson has to be captured back into the context layer, otherwise the next agent starts from zero on the same problem. Without that loop, every agent in the organization is a fresh new hire, making the same mistakes the last one made.

The Agent Context Kit exists to close that loop. Agents update descriptions, save documents, and add structured properties as part of their normal operation, so each interaction enriches the context that the next agent inherits.

For the technical detail on how this works in practice, my colleague Nick Adams wrote a longer post on building autonomous data agents.

What this looks like in practice

The reason I can talk about this with any conviction is that we got it wrong inside the DataHub organization before we got it right.

About a year ago, I went on what people called a vibe-coding bender and built a couple of internal AI agents over a weekend. Lisa (named after the younger Simpson sibling) became our research and insight agent. She had access to Zendesk, Slack, Hubspot, and our Gong call recordings, and her job was to know our customers and prospects. Bart was our coding agent, scouting around the codebase, fixing bugs, and shipping changes for human review.

The first few rounds were terrible (and I build this stuff for a living):

- Lisa was giving great-sounding insights about customer behavior. The trouble was that her ingest pipeline had been quietly broken for days. She didn’t know about it. Nothing in her context told her that the dataset she was citing was stale. She had no trust signals attached to the data she was reading, so she did what an agent without trust signals always does. She answered with confidence.

- Bart was hallucinating in a different way. He was making decisions based on stale documentation about parts of our codebase that had moved on. The institutional knowledge he needed had never been captured in a form he could find, and there was no feedback loop catching the corrections he was generating along the way.

I remember the moment I thought to myself, eh, we’re too small for this. We don’t need DataHub as the context layer for our own agents. We’re a small team. How much could be hiding in there?

Turns out, plenty.

We moved both agents onto DataHub. They started reading from a single context layer, with trust signals attached to the data they were querying and institutional knowledge attached to the assets they were touching. They started writing memories back into that layer, so each interaction made the next one better. The shift wasn’t subtle.

If a company our size could be that wrong about how to organize context for its own agents, I’m genuinely worried about where the rest of the industry is going to end up before this becomes obvious to everyone.

Where this lives: the context platform

The three components above aren’t separate tools to procure. They’re surfaces of one underlying graph, and that graph is what we mean by a context platform, the infrastructure that makes context management possible at enterprise scale.

The way to think about a context platform is as the invisible plumbing for agent onboarding. It connects to every agentic AI surface area in the organization through Model Context Protocol (MCP), the open standard the industry is converging on for agent-to-data connections. Whether your agents live in Claude, ChatGPT, Cursor, internal apps, or somewhere we haven’t named yet, they draw from the same context layer through the same protocol. No team rebuilding its own RAG pipeline from scratch. No three different vector stores answering the same question three different ways. No agent operating on a private context layer that the next agent doesn’t inherit from.

This isn’t a hypothetical preference. In our 2026 State of Context Management Report, 93% of data leaders said they want context to live as shared infrastructure rather than team-specific tooling. The recognition is there. What isn’t there yet is the architectural muscle memory to actually build it that way.

The platform is the thing that makes onboarding AI agents repeatable. Every new agent the organization deploys inherits the institutional knowledge, the trust signals, and the feedback loop on day one, instead of starting from a blank context window. That’s the compounding advantage. The first agent costs you the work of building the context layer. Every subsequent agent runs on top of it.

Onboarding isn’t optional for new employees and it isn’t optional for agents. The companies treating it as a discipline now are building a foundation their next thousand agents will run on. The ones skipping it are deploying digital workers who act with confidence about questions they were never equipped to answer, and the cost of that is going to show up later, in production, in front of customers, at machine speed.

Future-proof your data catalog

DataHub transforms enterprise metadata management with AI-powered discovery, intelligent observability, and automated governance.

Explore DataHub Cloud

Take a self-guided product tour to see DataHub Cloud in action.

Join the DataHub open source community

Join our 14,000+ community members to collaborate with the data practitioners who are shaping the future of data and AI.

FAQs

Recommended Next Reads