What Is an AI Data Catalog?

Separating Real AI from Retrofitted Chatbots

Quick definition: AI data catalog

An AI data catalog uses artificial intelligence and machine learning to automate metadata management, enhance data discovery, and enable both humans and machines to find, understand, and trust data and AI assets. Unlike traditional catalogs that rely on manual documentation and keyword search, AI data catalogs can generate descriptions, classify sensitive data, discover relationships across data sources and AI models, and answer natural language questions about your data and AI estate.

But here’s what that definition misses: AI capabilities only work when built on the right architecture. A catalog with stale, batch-updated metadata can’t give accurate answers, no matter how sophisticated its AI models.

For context on how data catalogs have evolved and why architecture matters, see our guide to data catalog generations.

Every data catalog vendor now claims to be “AI-powered.” The label has become so ubiquitous that it’s nearly meaningless.

Here’s what typically happens: A legacy catalog built for human portal browsing adds a chatbot interface, markets itself as an “AI data catalog,” and calls it a day. Meanwhile, the underlying architecture remains unchanged—metadata still updates overnight, APIs remain afterthoughts, and AI assets like ML models and training datasets aren’t even in scope.

For data leaders evaluating catalog solutions or questioning whether their current tool’s AI features are actually delivering value, this creates a real problem. How do you separate genuine AI capabilities from marketing window dressing?

The answer lies in understanding two things: what AI can genuinely do inside a data catalog, and what architectural foundation it requires to work. Get either wrong, and you’re left with an expensive tool that promises intelligence but delivers frustration.

What AI actually does in a modern data catalog

The phrase “AI-powered” gets thrown around loosely. Let’s be specific about what AI genuinely delivers—and what it requires to function properly.

| AI Capability | What It Does | What It Requires |

| Conversational discovery | Natural language search across metadata | Real-time metadata; unified metadata graph |

| Automated documentation | Generates descriptions from context, queries, lineage | Column-level lineage; transformation logic access |

| Intelligent classification | Detects PII, tags sensitive data automatically | Column-level visibility; lineage for propagation |

| Relationship discovery | Identifies matching entities across datasets | Embedded metadata; graph-based retrieval |

| Anomaly detection | Spots freshness, volume, schema issues proactively | Continuous monitoring; ML pattern recognition |

Each capability sounds straightforward. The complexity (and the differentiation) lies in execution.

Conversational discovery

This is table stakes: Every catalog claiming AI capabilities offers some form of natural language search. Users ask questions like “Show me all tables with customer PII” or “What dashboards depend on the orders table?” and get answers without learning query syntax.

What separates useful conversational discovery from a frustrating chatbot experience is the quality and freshness of the underlying metadata. If the catalog only knows about yesterday’s state, or last week’s, the AI will confidently deliver outdated answers.

DataHub’s Ask DataHub provides conversational discovery across Slack, Teams, and the DataHub interface, with answers grounded in real-time metadata. The difference is immediately noticeable.

“We added Ask DataHub in our data support workflow and it has immediately lowered the friction to getting answers from our data. People ask more questions, learn more on their own, and jump in to help each other. It’s become a driver of adoption and collaboration.”

– Connell Donaghy, Senior Software Engineer, Chime

Automated documentation and enrichment

Manual documentation doesn’t scale. Data engineers know this viscerally; they’ve lived the reality of descriptions that go stale within weeks, datasets that never get documented because the experts are too busy using them, and onboarding processes that stretch months because there’s nothing to consult.

AI changes this by generating documentation automatically. But the quality of AI-generated descriptions depends entirely on what context the system has access to.

A sophisticated AI catalog doesn’t just look at table names and column headers. It analyzes transformation logic to understand what a dataset actually represents. It examines query patterns to see how people use the data. It traces lineage to understand where values originate and how they’re calculated.

The result: Descriptions that would take hours to write manually, generated in seconds—and descriptions that actually reflect how data is used, not just what someone intended when they created it.

The game-changer for documentation is when AI has access to lineage. Suddenly it’s not guessing what a field means based on its name—it knows, because it can see the transformations that created it.

Intelligent classification and tagging

Compliance requirements have shifted classification from nice-to-have to mandatory. GDPR, CCPA, and emerging AI regulations require precise visibility into where sensitive data lives, how it flows through transformations, and which downstream systems consume it.

AI catalogs automate this by detecting sensitive data types (like PII, financial information, health records) without manual review of every column. More importantly, they propagate these classifications through lineage. Tag a source column as containing PII, and every downstream dataset that inherits from it gets tagged automatically.

This only works with column-level lineage. Table-level lineage tells you that Table A feeds Table B, but it can’t tell you which specific fields contain sensitive data or where those fields flow. For compliance, that precision is everything.

Entity resolution and relationship discovery

Here’s where AI catalogs can deliver genuinely differentiated value—and where the architectural requirements become most demanding.

Consider a common scenario: Your organization has a foot traffic dataset with a column called post_code, a weather dataset with a column called zip_code, and a customer dataset with postal_code. These all represent the same thing, but keyword search will never connect them. A data analyst searching for “zip code data” might find one dataset and completely miss the others.

Sophisticated AI catalogs solve this through entity resolution—automatically identifying columns across datasets that represent the same underlying entity, even when they have different names.

How does this work in practice? Several techniques, in increasing order of sophistication:

- Vector similarity on embedded metadata: The catalog embeds column descriptions, sample values, and context into vectors. Columns with similar embeddings likely represent the same entity. This is fast and doesn’t require hitting LLM APIs repeatedly, but it depends heavily on how well the embeddings capture semantic meaning.

- LLM-based matching: The catalog prompts a language model with details about two columns—names, descriptions, sample values—and asks whether they represent the same identifier. This is more accurate but more expensive, requiring API calls for each comparison.

- Graph-enhanced retrieval: This is where things get interesting. Once the catalog has identified that zip_code and post_code represent the same entity, that relationship is stored in the graph. When a new dataset arrives with a column called ZP containing zip code data, the AI doesn’t just compare it to zip_code—it pulls in context from all previously connected columns. The more relationships the system learns, the better it gets at identifying new ones.

Entity resolution is where the knowledge graph architecture really pays off. Every relationship you discover makes the next discovery easier. The system compounds its own intelligence over time.

The business impact is substantial. Instead of finding one dataset when searching for Starbucks-related data, analysts discover that foot traffic data mentions Starbucks directly via ticker symbol, and that weather data connects to Starbucks locations through shared postal codes. Two datasets become available for analysis instead of one, relationships that might never have been discovered through manual exploration.

Anomaly detection and quality monitoring

Traditional data quality approaches are reactive: Something breaks, someone notices, a ticket gets filed, and the data team investigates. By the time the issue is resolved, downstream dashboards have been serving bad data for hours or days.

AI-powered observability flips this to proactive detection. Machine learning models learn normal patterns—expected freshness intervals, typical volume ranges, stable schema structures—and alert when reality diverges.

This isn’t just “send an alert when data is late.” Sophisticated anomaly detection understands context. A 20% drop in volume might be alarming for one dataset and completely normal for another that has weekly seasonality. The AI learns these patterns and calibrates alerts accordingly.

The key requirement: Observability and discovery must be unified. Users shouldn’t have to check one tool to find data and another to know if it’s trustworthy. In a modern AI catalog, quality metrics, freshness SLAs, and incident history appear in the same view as metadata and lineage.

Why most “AI data catalogs” fall short

Understanding what AI can do is only half the picture. The harder question: Why do so many “AI data catalogs” fail to deliver on their promises?

The chatbot problem

Adding a conversational interface to a portal doesn’t make it an AI catalog. If the underlying metadata is stale, incomplete, and manually maintained, the chatbot has nothing useful to work with.

This is the “garbage in, garbage out” problem at the metadata layer. You can bolt the most sophisticated language model onto a Gen 2 catalog, and it will still give wrong answers—because the metadata it’s querying doesn’t reflect current reality.

Architectural requirements for AI to actually work

The gap between AI features and AI value comes down to architecture. Here’s what each capability actually requires:

| AI Capability | Required Foundation | Why Legacy Catalogs Struggle |

| Accurate conversational answers | Real-time metadata (not batch) | Nightly syncs mean today’s questions get yesterday’s answers |

| Relationship discovery across datasets | Column-level lineage + unified metadata graph | Table-level lineage can’t identify which columns match |

| AI asset governance | Unified data + AI scope | Data-only catalogs don’t track models, features, training data |

| Programmatic AI agent access | API-first architecture | Portal-first tools treat APIs as afterthoughts |

These aren’t feature gaps that can be closed with a software update. They’re architectural constraints baked into the foundation of how the catalog was built.

The Gen 2 trap

The data catalog market has evolved through distinct generations. Gen 1 was spreadsheets and institutional knowledge. Gen 2 brought centralized portals for searching and documenting data—tools like Alation, Collibra, and Informatica that emerged around 2015 and genuinely solved the chaos of Gen 1.

But Gen 2 catalogs were built on assumptions that no longer hold:

- Humans are the primary users: Portals first, APIs as afterthoughts. The catalog is a destination you visit, not infrastructure your systems depend on.

- Metadata changes slowly: Batch ingestion on nightly schedules is acceptable.

- Manual curation is sustainable: Data stewards will document datasets and maintain tags as things change.

- Tables and columns are the scope: ML models, features, and training datasets aren’t in the picture.

Gen 3 catalogs—true AI data catalogs—are architecturally different. They serve humans and machines. They process metadata in real-time. They automate what Gen 2 expected humans to do. They cover data and AI assets in a unified graph.

For the complete breakdown of catalog generations and what defines each, see our guide: What is a Data Catalog?

Choosing a Gen 2 catalog today, even one with AI features bolted on, means planning another migration in two to three years when its limitations become blocking.

What a true AI data catalog delivers

DataHub Cloud is built as a Gen 3 AI data catalog from the ground up—not retrofitted from a legacy portal architecture. Here’s what that means in practice.

1. Discovery for humans and machines

For humans: Ask DataHub provides conversational search directly in Slack, Teams, or the DataHub interface. Ask questions in plain English, get answers grounded in real-time metadata with citations linking back to source assets.

For machines: GraphQL and REST APIs enable programmatic access at machine speed. CI/CD pipelines can validate data contracts before deployment, embedding governance directly into data workflows. Automation scripts can query lineage before making changes. AI agents can discover datasets, check compliance, and record their actions.

This dual-audience architecture isn’t a nice-to-have—it’s foundational for where data management is heading. As AI agents become more prevalent, the catalog becomes infrastructure they depend on for decision-making.

DataHub’s support for Model Context Protocol (MCP) takes this further, enabling AI assistants to query metadata conversationally through emerging standards for agent integration.

2. Unified data + AI asset coverage

Traditional catalogs handle tables, views, dashboards, and pipelines. That’s necessary but no longer sufficient.

DataHub catalogs AI assets alongside traditional data: ML models, features, vector databases, notebooks, LLM pipelines. A single lineage graph connects raw data through transformations to model training to predictions to downstream applications.

This matters for several reasons:

- Reproducibility: Track which snapshot of training data fed which model version.

- Debugging: When model performance degrades, trace back through data lineage to understand whether data drift or model changes are responsible.

- Compliance: When regulators ask “what data trained this model?”, the answer is documented automatically rather than reconstructed through forensic investigation.

Without unified data + AI coverage, organizations manage these assets in separate tools with separate lineage graphs that don’t connect. Data governance gaps emerge at the boundaries. Compliance risks multiply as AI scales.

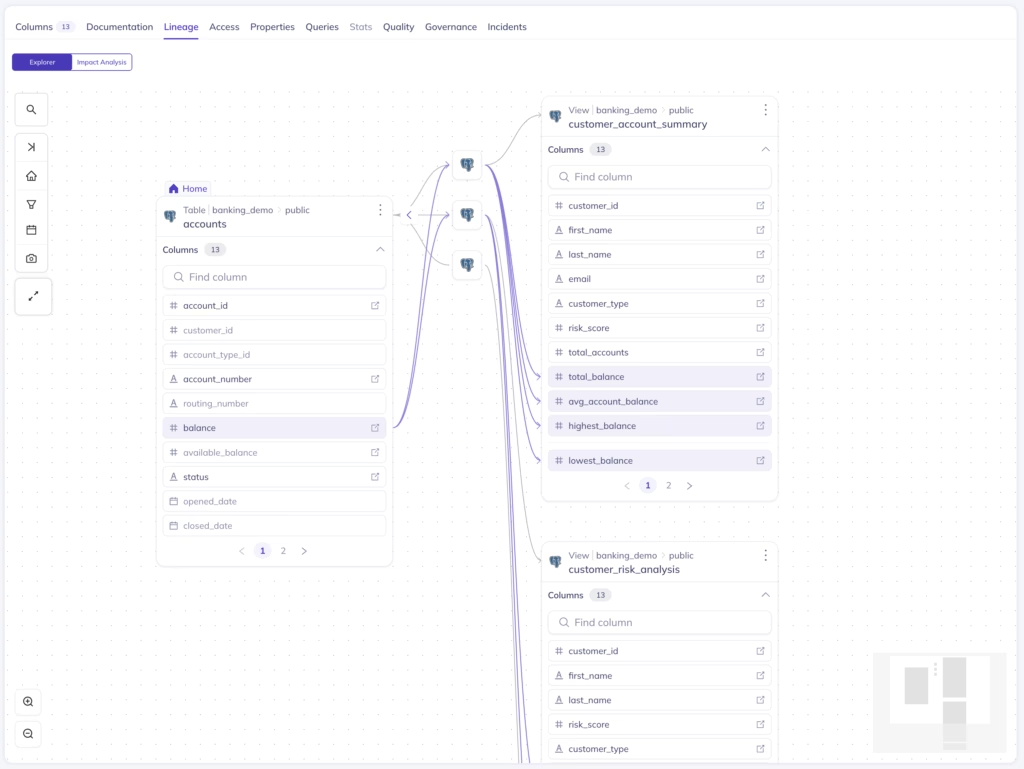

3. Column-level lineage as AI foundation

Table-level lineage tells you that Table A feeds Table B. Column-level lineage tells you exactly which fields flow through which transformations—critical for compliance (“which columns contain PII and where do they flow?”) and impact analysis (“what breaks if I change this specific field?”).

DataHub traces column-level lineage across the entire data supply chain: from Kafka events through Spark transformations to Snowflake tables to Looker dashboards to SageMaker models. Real-time updates mean lineage reflects current state, not yesterday’s batch run.

“My favorite part about DataHub is the lineage because this is one really easy way of connecting the producers to the consumers. Now the producers know who is using their data. Consumers know where the data is coming from. And it is easier to have accountability mechanisms.”

– Sherin Thomas, Software Engineer, Chime

4. Observability and governance unified with discovery

In legacy architectures, discovery tells you where data lives. Observability tells you whether it’s healthy. Governance tells you whether it’s compliant. Three separate tools, three separate contexts to maintain, three places where things fall through the cracks.

DataHub unifies these concerns. Users don’t just find data; they see real-time quality metrics, freshness SLAs, and incident history in the same view. Governance policies and data access controls apply automatically based on detected sensitivity. Quality improvements align with usage patterns because the system knows which datasets matter most.

How to evaluate AI data catalog solutions

When evaluating AI data catalogs, the questions that matter go beyond feature checklists. Here’s how to separate genuine capabilities from marketing claims:

| Question to Ask | Why It Matters | What DataHub Delivers |

| How quickly do metadata changes appear? | AI needs current data to give accurate answers. Batch updates mean the AI is always working with stale information. | Stream-processing architecture (Kafka) reflects changes in seconds, not overnight. |

| Can you trace column-level lineage across platforms? | Entity resolution, compliance, and impact analysis all require column-level granularity. Table-level isn’t sufficient. | Column-level lineage across 100+ integrations including Snowflake, Databricks, dbt, Airflow, Tableau, and SageMaker. |

| Does it cover AI/ML assets or just traditional data? | You can’t govern AI in production with a data-only catalog. Models, features, and training data need to be tracked with the same rigor as tables. | Unified data + AI asset catalog with consistent metadata schema, lineage, and governance across both. |

| Are APIs designed for machine consumption? | Determines whether automation scripts, CI/CD pipelines, and AI agents can use the catalog programmatically. | API-first architecture with GraphQL/REST designed for machine access, plus MCP support for AI agent integration. |

| Is there an open-source foundation? | Reduces vendor lock-in risk and ensures platform evolution aligns with industry standards rather than a single vendor’s roadmap. | Built on the most popular open-source data catalog (14K+ community members). |

The most telling question: What happens when I ask about something that changed this morning? If the answer is “you’ll see it tomorrow,” that’s a Gen 2 tool with AI features bolted on.

Ready to see what a true AI data catalog can do?

The gap between retrofitted AI features and genuine AI capabilities isn’t about marketing—it’s about architecture. If your current catalog struggles to keep pace with AI initiatives, decentralized data architectures, or the shift to self-service, it may be time to see what’s possible with infrastructure built for where data management is heading.

DataHub Cloud delivers Gen 3 AI data catalog capabilities today, while evolving toward Gen 4 (context platforms where AI agents autonomously manage assets). Organizations like Block and Apple are already leveraging this architecture at scale.

Check out our product demos or explore the product to see the difference.

Future-proof your data catalog

DataHub transforms enterprise metadata management with AI-powered discovery, intelligent observability, and automated governance.

Explore DataHub Cloud

Take a self-guided product tour to see DataHub Cloud in action.

Join the DataHub open source community

Join our 14,000+ community members to collaborate with the data practitioners who are shaping the future of data and AI.

Originally published June 30, 2024, updated February 3, 2026.

FAQs

Recommended Next Reads