Context Platform ROI: The Real Cost (and the Hidden One You’re Already Paying)

Quick definition: What is a context platform?

A context platform is the infrastructure layer that unifies metadata, lineage, quality signals, ownership, documentation, and institutional knowledge across existing enterprise data systems. It’s the foundation that makes context management (the practice of capturing and operationalizing context at scale) possible for humans and AI agents.

Most organizations already pay the cost of a context platform. They just pay it in pieces, spread across data team overhead, failed AI projects, and audit scrambles. None of it shows up on the P&L as a line called “context,” but it adds up to real dollars every year.

The 2026 IDC Business Value Solution Brief on DataHub Cloud makes that distributed spend visible across five measurable categories of return. The framework is useful whether or not DataHub is on your shortlist. It gives you a structured way to find the numbers that build a credible business case in any context platform evaluation.

The five categories of measurable returns

IDC interviewed organizations using DataHub Cloud as a context platform. Participant overview:

- Scale: Ranged from medium-sized businesses to very large enterprises

- Industries: ecommerce, financial services, healthcare, IT services, and software

- Revenue: Average annual revenue of $7.01B and median revenue of $2.80B

The per-organization dollar figures below come from IDC’s benchmark sample. Your absolute numbers will land elsewhere, but the percentage improvements tend to transfer because the underlying mechanisms, centralized search, mapped lineage, clear ownership, and visible quality signals, work the same way at any scale.

1. Productivity gains for data producers and consumers

The hidden cost: Before a context platform, finding the right data is a manual process: Analysts ping engineers in Slack. Engineers pause roadmap work to answer “Is this table fresh?” questions. Nobody tracks the cost directly, but it surfaces as headcount pressure, missed analytics timelines, and delivery teams that can’t scale without hiring.

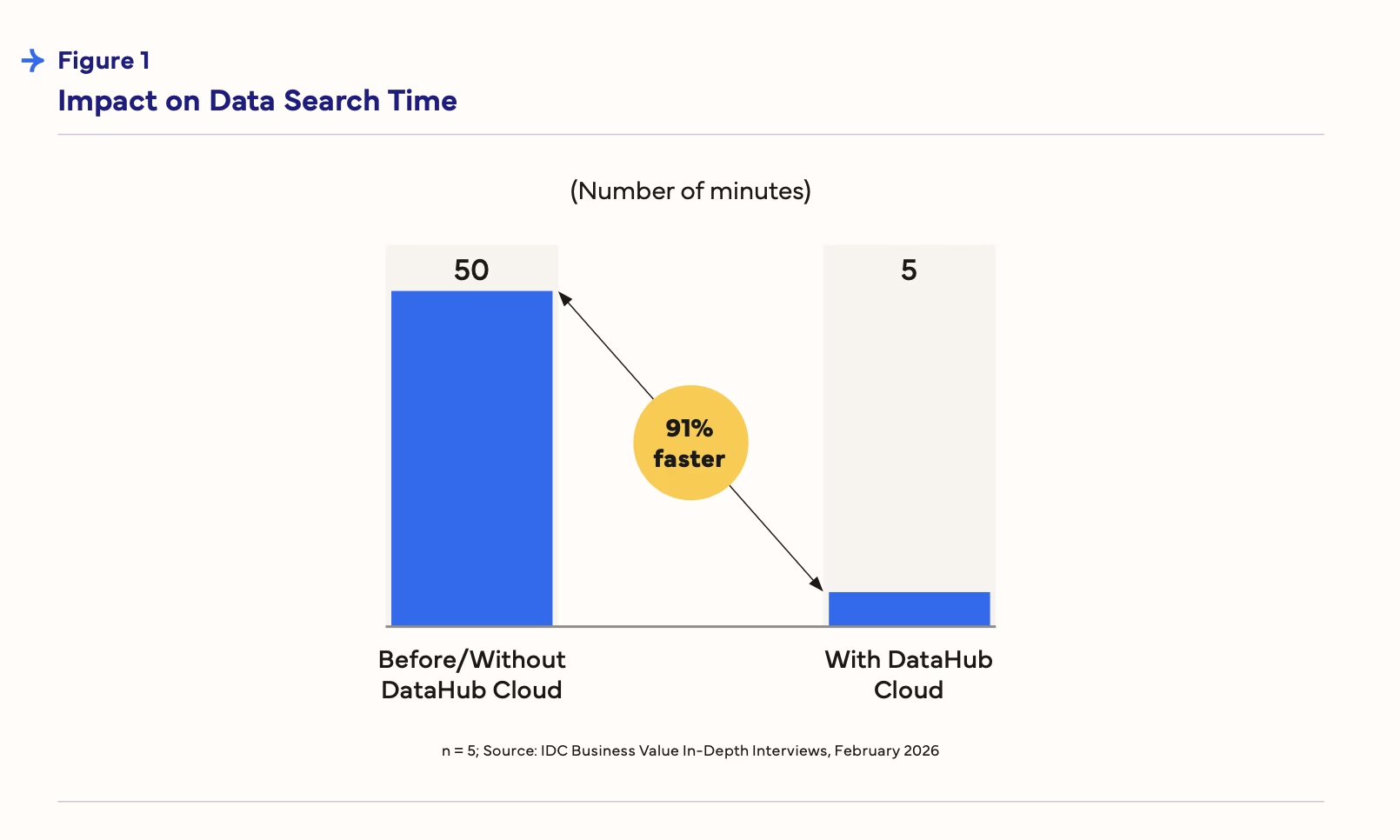

What IDC measured: The headline number is search time. It fell from 50 minutes to five, a 91% reduction, with success rates climbing from 22% to 69% over the same period. Those gains ran downhill through every team that depends on data.

- Time to find and validate a data set dropped 82%

- Data engineering teams became 17% more productive, a gain IDC valued at $2.02M per organization on average

- Analytics teams gained another 18%, worth $914,400

- And IT, the absorbing layer for everyone’s failed searches, saw support load drop 58%

Every developer is saving at least two hours a week, which adds up to thousands of hours per month across the organization.

— IDC The Business Value of DataHub Cloud, DataHub customer

The mechanism: A context platform puts searchable metadata, lineage, and ownership on one surface, so producers document once and consumers find answers without escalating to the team that built the data. The downstream effect is a shift in where data team capacity goes, away from reactive maintenance work and toward the high-impact initiatives that actually move the roadmap forward.

Where DataHub fits: DataHub works both sides of the producer/consumer divide. Automated metadata ingestion pulls context from the major cloud data platforms, BI tools, and orchestration layers into a single catalog and AI-generated documentation closes metadata completeness gaps in weeks rather than years.

For consumers, Ask DataHub turns that catalog into a natural language surface, so discovery becomes a question rather than an escalation. See more in our guide to data discovery.

2. Reduce the cost and impact of data incidents

The hidden cost: Broken pipelines ship bad numbers to executive dashboards and poisoned training data to ML models. Engineers spend hours on root-cause analysis that should take minutes. Incidents rarely get costed, but they consume team capacity and erode downstream trust in the data platform itself.

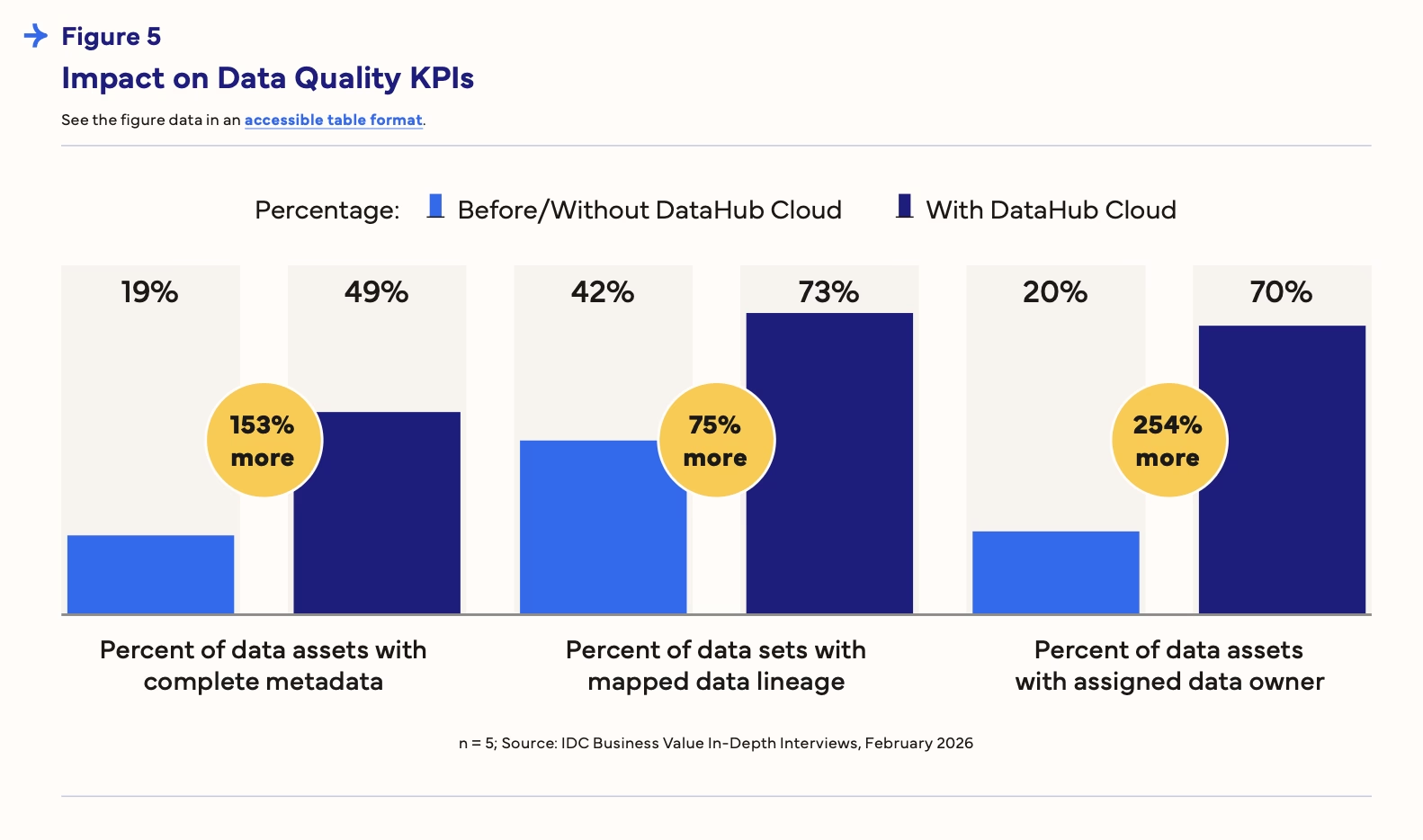

What IDC measured: Data quality issues dropped across the board:

- 42% fewer overall

- 48% fewer timeliness issues

- 56% fewer completeness issues

Those gains traced back to improvements in the scaffolding underneath:

- Metadata completeness rose 153%

- Mapped lineage coverage rose 75%

Governance teams, typically staffed to manage that scaffolding manually, gained 20% efficiency worth $976,700 per organization on average.

The mechanism: With quality signals, lineage, and ownership alongside the data assets they describe, consumers know whether to trust a table before they query it, engineers trace incident impact without opening a Slack thread, and incidents route to the right person on the first try.

Where DataHub fits: Smart Assertions learn historical patterns and set thresholds without manual configuration, feeding a Data Health Dashboard where what’s broken, at risk, and healthy shows on a single surface. Pipeline circuit breakers close the loop by halting downstream jobs when upstream checks fail, so bad data doesn’t reach AI agents or BI users.

3. Accelerate AI to production

The hidden cost: AI pilots get funded, then stall. They don’t usually fail because the model is wrong. They fail because training data is incomplete, lineage can’t be traced end-to-end, and ML teams can’t demonstrate that the data is model-ready. The sunk cost is months of ML engineering on projects that never reach production.

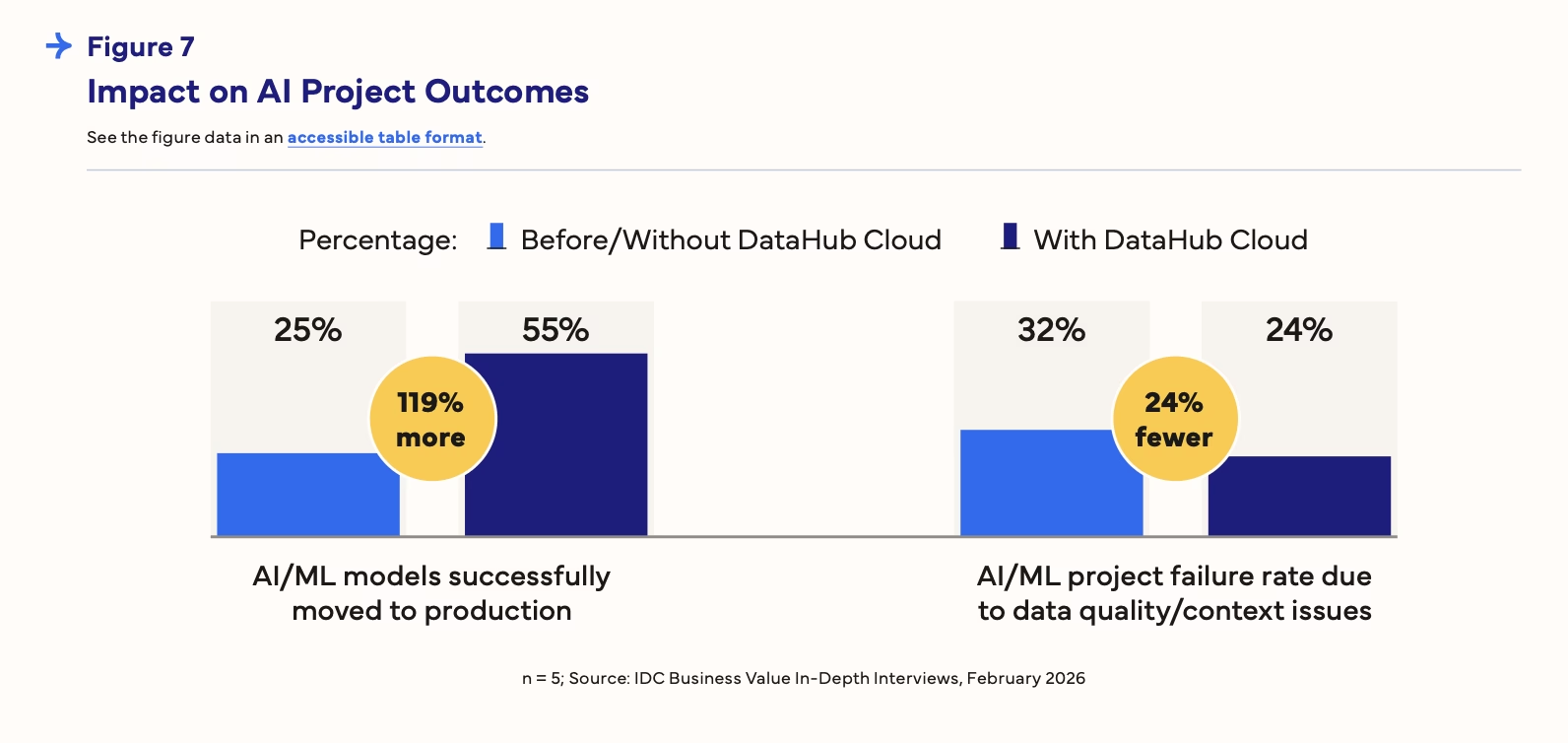

What IDC measured: Interviewed organizations moved 119% more AI/ML models into production, lifting success rates from 25% to 55%, while project failure rates tied to data quality and context issues dropped 24%.

IDC flagged the category as carrying value “beyond the efficiencies gained in developing and delivering these initiatives,” since shipped AI programs drive revenue directly rather than just reclaiming engineering hours. That’s where competitive advantage actually gets built, not in the model itself but in the data context feeding it.

The mechanism: ML teams see upstream data quality, lineage, and ownership before a model reaches production, and training data clears its quality thresholds before deployment rather than after the model starts misbehaving.

Where DataHub fits: Before training starts, the AI Fitness Score gives ML teams a readiness signal on their data, whether they’re building predictive models or generative AI applications.

As development progresses, lineage for ML pipelines traces how data flows into models, so upstream quality issues surface early. After rollout, real-time monitors verify that production training data continues to meet thresholds.

4. Reduce risk of failing compliance audits

The hidden cost: Audits consume compliance team cycles and pull engineers off roadmap work, with every new regulation kicking off another scramble cycle. When audits fail, the cost is fines, delayed product launches, or lost certifications that customers require as a condition of doing business.

What IDC measured: DataHub Cloud customers reported 48% fewer data-related outages and resolved them 58% faster, because the lineage and ownership visibility that supports audit readiness also supports incident response.

On the team side, compliance gained 8% efficiency worth $431,200 per organization, and data pipeline development teams gained 13% productivity worth $3.52M.

The mechanism: Centralized metadata, assigned ownership, and mapped lineage across existing systems together create a clear record of how data is sourced, transformed, and consumed, which turns audit response into a query rather than a fire drill.

Where DataHub fits: DataHub’s compliance forms and workflow engine route classification, retention, and attestation requirements to the right owners and track completion through to sign-off.

Metadata tests enforce data governance standards continuously rather than at audit time, and data contracts bundle freshness, volume, schema, and column assertions into enforceable agreements that validate on schedule.

5. Reduce data costs

The hidden cost: Organizations accumulate redundant tables, orphaned data sets, and pipelines that no downstream consumer actually uses. Storage and query bills grow, and nobody retires anything, because nobody has visibility into what’s actually in use.

What IDC measured: On average, interviewed organizations required 8% less storage, with Snowflake-specific reductions reaching up to 25%.

DataHub Cloud has helped us save on data storage costs by around 20–25%, which is about $250,000 to $300,000 per year.

— IDC The Business Value of DataHub Cloud, DataHub customer

Another customer identified 10% of data that wasn’t being used, saving $40,000 annually.

The mechanism: Usage statistics combined with lineage reveal which tables have no downstream consumers, ownership assignment makes someone accountable for retirement, and deprecation workflows turn that visibility into action.

Where DataHub fits: Usage and lineage analytics identify redundant and orphaned assets, and deprecation workflows tag them for retirement, notify owners, and track archival, turning cost visibility into actual cost reduction rather than another report that nobody acts on.

How to build the business case for your organization

IDC’s numbers are benchmarks, not forecasts. The interviewed sample averaged $7B in revenue and 126,380 employees. Your absolute numbers will differ, but the improvement rates tend to transfer reasonably across organization sizes because the problems (underlying inefficiencies, search friction, incident response overhead, AI readiness gaps, audit scramble, orphaned data) exist at every scale of organization.

A workable method:

- Use the five categories as a diagnostic for business outcomes: Which are costing you the most today? For most data-mature organizations, the first two categories dominate. For organizations under board mandate to accelerate AI adoption or pass new audits, the middle three take priority.

- Baseline your current spend in each relevant category:

- What does a typical data search cost in engineer-hours?

- What’s your data incident rate, mean time to resolve, and downstream business cost per incident?

- What percentage of AI experiments reach production?

- How much of your storage bill is unused or redundant?

- Apply IDC’s improvement rates as a conservative estimate: A 91% reduction in search time is not a stretch number. It’s an average across the interviewed organizations.

- Read the full IDC Business Value Solution Brief on DataHub Cloud for the methodology and category-by-category detail.

- Run your own numbers with the DataHub Cloud Value Estimator for a live, calculator-driven first pass.

Future-proof your data catalog

DataHub transforms enterprise metadata management with AI-powered discovery, intelligent observability, and automated governance.

Explore DataHub Cloud

Take a self-guided product tour to see DataHub Cloud in action.

Join the DataHub open source community

Join our 14,000+ community members to collaborate with the data practitioners who are shaping the future of data and AI.

FAQs

Recommended Next Reads