The Data Engineer’s Guide to Context Engineering

Quick definition: Context engineering

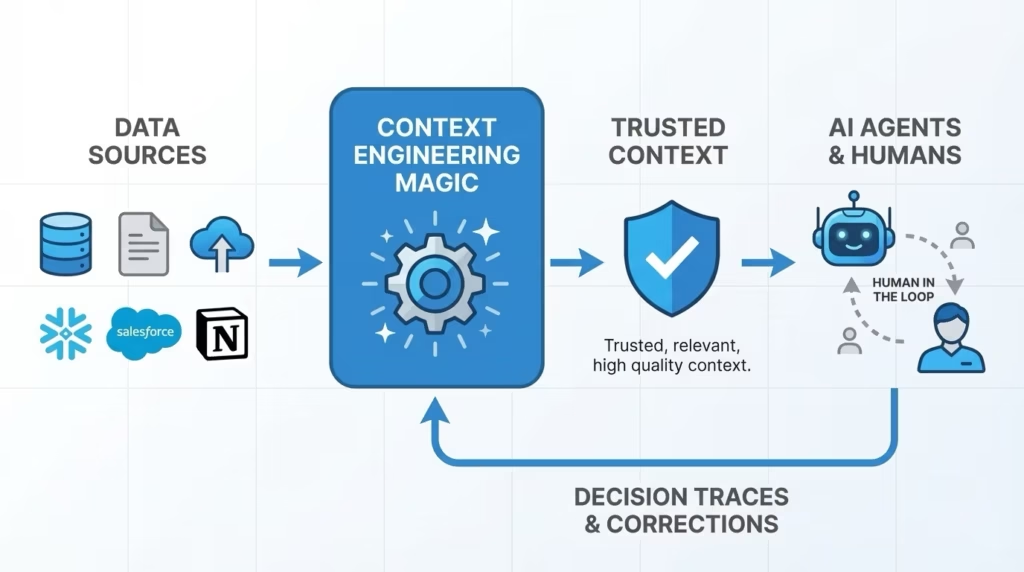

Context engineering is the discipline of designing and managing the authoritative information AI agents need to make accurate, reliable decisions. It goes way beyond writing prompts; context engineering is a systems discipline: Architecting how data, instructions, tools, memory, and governance rules flow to an AI model at inference time.

For data engineers, it represents the natural evolution of their work as the primary consumers of enterprise data shift from human analysts to AI agents.

As data engineers, we’ve spent years building the infrastructure that makes enterprise data usable: Metadata catalogs, lineage tracking, data quality monitoring, access controls, and governance frameworks—all the systems that help humans to easily find, trust, and act on data.

But now, the primary consumers of that data are changing: AI agents query your data platforms, making decisions based on what they find, and taking action on behalf of your organization.

And these agents need something beyond raw data access. They need relevant context: The semantic understanding, business logic, quality signals, and governance guardrails that turn data into trustworthy information. Context engineering is the discipline that makes this possible.

From serving humans to agents

For most of data engineering’s history, the end user of data insights was human: An analyst running a query, a business leader reviewing a dashboard, a data scientist building a model. The data engineer’s job was to make data discoverable, reliable, and governed so these humans could use the data to make mission-critical decisions.

Agentic AI changes the consumption pattern fundamentally. When an AI agent needs to answer a business question, it won’t open a dashboard and apply human judgment. Instead, it will make use of the data and context it has access to and act on it directly. No human in the loop required. If that context is incomplete, stale, ungoverned, semantically ambiguous, or cluttered with irrelevant information, the agent won’t pause and ask a colleague for clarification. It will produce a confident, but wrong, answer.

This is precisely why context engineering is emerging as a distinct discipline, extending well beyond a typical RAG implementation.

Retrieval-augmented generation (RAG) addresses one narrow piece of the problem: retrieving relevant documents and placing them into the model’s context window. But RAG is fundamentally about the mechanics of retrieval—the “how.”

Context engineering, by contrast, expands to include principles: deciding what information, tools, memory, and constraints the agent sees so that it behaves in ways consistent with enterprise goals, policies, and workflows.

To be specific, enterprise agents deployed to production need:

- Disambiguated business definitions that span departments

- Access to structured and unstructured data sources across the enterprise estate

- Governance rules that determine what the agent can and cannot access

- Semantic relationships that connect data across systems, including external data sources

- Freshness guarantees that prevent stale data sources from driving decisions

- Understanding of past decisions and exceptional cases when human intervention was necessary

- Quality signals that indicate how trustworthy a data source is right now

Here’s the good news: Data engineering teams have traditionally been responsible for curating this type of context to help the other humans at their organizations understand and use their data.

Same skills, new consumerHow the data engineering workflow maps to context engineeringThe infrastructure skills are the same. The consumer and the stakes have changed. | ||

| DATA ENGINEERING FOR HUMANS… | CONTEXT ENGINEERING FOR AGENTS… | |

| BUILD | Data catalog Organize data assets with lineage, quality scores, ownership, and business definitions | Context platform Extend metadata with semantic understanding, business logic, decision history, human corrections, and tool definitions |

| GOVERN | Access and quality controls Enforce who can see what, validate freshness, and monitor data health | Agent governance Enforce what agents can access, audit their decisions, and monitor context quality |

| CONSUME | Analyst queries the data Human applies judgment, asks follow-ups, and interprets ambiguity | Agent retrieves data and context No human judgment at query time—agent acts on the context it needs to solve the problem |

| OUTCOME | Informed decision Human context fills the gaps between data and meaning | Autonomous action Context quality determines outcome quality. Human-in-the-loop for only key actions |

From managing metadata to context

Until now, metadata has been thought of as what humans need to understand an enterprise’s data. It answers questions like: What is this data for? Where did it come from? When was it last updated? What does this column mean? Who owns it? Is it production-ready? Does it contain sensitive information?

And context is what autonomous agents need to know about the enterprise and its data to make high quality decisions to accomplish tasks consistently and reliably. Context answers the same questions, but is delivered in a format that machines can use to take action autonomously.

Context = structured metadata + unstructured knowledge + semantic understanding

Your metadata catalog already contains much of this context. Enterprise knowledge is captured in your business glossary, table and column descriptions, data lineage, quality checks, ownership information, and access policies—the raw materials of context engineering. To serve agents effectively, we don’t need to start from scratch. We need to continue to invest in the curated enterprise context we already have: our metadata.

When people talk about context engineering as if it’s a brand new discipline, they’re ignoring the fact that data engineers have been solving the hardest part of this problem for years. The institutional knowledge encoded in your metadata catalog, your lineage graphs, your data quality rules—that’s the foundation context engineering needs to work at scale.

Why data engineers should drive the discussion around context

The most effective AI teams combine distinct expertise:

- Deep understanding of LLM capabilities

- Knowledge of enterprise data semantics and infrastructure

- Experience with production systems at scale

- Understanding of compliance and governance requirements

Data engineers bring critical capabilities to this mix. And yet most context engineering content speaks exclusively to AI engineers building agents, as if enterprise context materializes out of thin air.

Consider what happens when context engineering lacks data engineering rigor. An agent retrieves a customer revenue figure, but doesn’t know that the finance team’s “customer” definition differs from the product team’s. It joins data from two systems without understanding that one uses fiscal quarters and the other uses calendar quarters. It surfaces a metric that was deprecated three months ago because nobody connected the data quality monitoring system to the context layer.

These are not AI engineering problems. These are data engineering problems manifesting in a new environment. And they require data engineering expertise to solve.

Context engineering isn’t just an AI challenge. The hardest problems—disambiguating business logic, ensuring data freshness, governing access—are the problems data engineers have solved before. We need both skill sets at the table.

As context engineering practices mature into enterprise context management, the experience of building scalable, governed data platforms positions data engineers to contribute meaningfully to their organization’s AI initiatives. Data engineers don’t need to become an AI researcher or start from scratch; they need to extend the infrastructure and systems expertise they’ve already developed to serve agentic consumers alongside human ones.

Six ways data engineer skills already transfer to context engineering

1. You already understand enterprise data semantics

You’ve spent years building the mental models of how enterprise data actually works. You have the tribal knowledge, now you just need to write it down. This institutional knowledge is the foundation of high-quality context.

When an agent asks “show me our top customers,” you know which customer definition to use (billing entity vs. product user vs. support account), what data quality issues exist in each source, which joins are safe and which will produce misleading results, and what the business actually means by “top” (revenue? growth? strategic importance?).

An AI engineer building the agent might design an elegant retrieval system. But without the semantic knowledge you carry, the agent retrieves the wrong “customer” table and produces a confidently incorrect answer. Effective context engineering needs this domain expertise encoded into the system so agents inherit the judgment you’ve built over years.

2. You’ve built the infrastructure that context engineering runs on

Context engineering doesn’t start from scratch. It builds on infrastructure you’ve already created:

- Databases, data warehouses, data lakes, and service APIs enable access to raw data

- Metadata catalogs become the foundation for context graphs

- Lineage tracking extends to trace context provenance for agents

- Data quality monitoring evolves into context quality monitoring

The infrastructure work you’ve already done creates the foundation that enables context engineering at scale. For a deeper look at how this infrastructure evolves, read how DataHub enables context management.

3. You think in pipelines and systems

Anthropic‘s engineering team describes context as “a finite resource with diminishing marginal returns.” As the volume of context grows, an agent’s ability to use that context effectively degrades. This means the real engineering challenge isn’t getting more context into the window. It’s getting the right context in the right order at the right time.

Sound familiar? This is the same optimization problem you solve in data pipelines every day:

- DAG design principles apply to agent task chains and multi-step reasoning flows

- Dependency management becomes managing context dependencies across agent steps

- Data freshness service-level agreements (SLAs) become context freshness guarantees

- Data observability extends to context observability

Your systems thinking (understanding how information flows through complex architectures, where bottlenecks emerge, how to monitor and debug at scale) is essential for building context systems that work reliably in production.

4. You understand governance as a first principle

As agentic AI moves into production, governance becomes the differentiator between prototypes and enterprise-grade systems.

Your experience here is increasingly valuable:

- Compliance requirements for data access extend to agent access patterns

- Monitoring extends from data systems to agent behavior: logging decisions and inspecting for policy violations before they surface as incidents

- Audit trails you’ve implemented for human users apply to agent decision-making

- Data retention policies govern context stored for agents

- Privacy regulations (GDPR, CCPA) require the same rigor for agentic access as for human access (knowing where sensitive data is matters!)

Add your experience managing data and AI governance, and helping manage access to sensitive data by AI models. As context management matures into enterprise infrastructure, governance expertise becomes essential. Your experience building compliant, auditable data systems positions you to ensure context systems meet the same standards.

5. You’ve debugged production data systems—and context systems break the same way

When context failures happen in production, the failure modes look remarkably familiar:

- Context staleness mirrors the data refresh problems you’ve debugged. An agent retrieves outdated data or documentation while using fresh transactional data and produces a recommendation based on policies that changed last quarter.

- Context inconsistency resembles joining across mismatched keys. Two context sources define “active user” differently, and the agent combines them without recognizing the conflict.

Context origins tracing is similar to lineage and cataloging. An agent makes a flawed recommendation, and without knowing which context sources drove that decision (and where those sources originated) you can’t diagnose or fix it.

| Data engineering failure | Context engineering equivalent | Why it breaks agents |

| Stale data from delayed refresh | Stale context: agent retrieves outdated documentation while using fresh transactional data | Agent recommends based on policies or definitions that changed last quarter |

| Key mismatches across joined tables | Semantic conflicts: two context sources define the same term differently | Agent combines “active user” figures from two systems using incompatible definitions |

| Incomplete lineage and cataloging | Context origins tracing: no record of which context sources drove a decision or where they originated | Agent makes a flawed recommendation and you can’t diagnose or fix it without knowing what context it used and where it came from |

When an agent produces incorrect results because it retrieved stale documentation while using fresh data, you’ll recognize this as a data freshness problem. Your debugging instincts and production experience transfer directly.

6. Your domain knowledge enables context curation

Building the context graph requires answering organizational questions that demand deep institutional knowledge:

- Which documentation is authoritative when multiple sources say different things?

- How do you semantically link datasets to business glossary terms?

- What implicit knowledge do subject matter experts carry that should be encoded into the context layer?

- Which conversation threads contain critical context that should be preserved?

These aren’t questions an AI engineer new to the organization can answer. They require the relationships, tribal knowledge, and understanding of trusted data sources that you’ve developed working across teams. This makes you effective at context curation, which is the most human and least automatable part of context engineering.

Context curation is where domain expertise meets infrastructure skills. You can build the most sophisticated retrieval system in the world, but if you haven’t curated the right context—if you don’t know which sources are trusted, which definitions are canonical, which knowledge is implicit—the system won’t deliver reliable results.

This is upskilling, not starting over

The path from data engineering to context engineering isn’t a career reset. It’s a career extension. You’re not abandoning the skills you’ve built; you’re applying them to increasingly complex tasks that the market is just beginning to recognize.

Context engineering emerged as a named discipline in mid-2025. Anthropic, LangChain, and Gartner are defining standards. But notice what’s missing from most of the conversation: the enterprise data perspective. Most context engineering content targets AI engineers building agents. Very little addresses the data engineers who build and manage the enterprise infrastructure those agents depend on.

That gap is your opportunity.

Context engineering is emerging fast. But the enterprise data infrastructure it depends on (cataloging, lineage, governance, and metadata management) is ground data engineers know well. As AI data catalogs evolve into context platforms, data engineers aren’t playing catch-up. They’re already holding the keys.

Join the DataHub community

The teams that succeed at context engineering will combine AI expertise with a deep understanding of enterprise data, and that’s a role data engineers are well positioned to play. At DataHub, we’re building a community where data engineers can learn and develop context engineering skills together. Join us!

Join our 14,000+ Community Members

FAQs

Recommended Next Reads