Part 2: How to Implement Data Mesh (Without Replacing One Bottleneck With Another)

In Part 1, “What Is Data Mesh?”, we covered the architecture, the principles of data mesh, and why data mesh is a critical enabler of reliable AI at scale. Let’s recap the definition:

Quick definition: Data mesh

Data mesh is a decentralized data architecture that shifts data ownership from centralized teams to domain experts. Introduced by Zhamak Dehghani in 2019, it applies principles from microservices and domain-driven design to analytical data. The approach rests on four principles: Domain ownership, data as a product, self-serve infrastructure, and federated governance.

Data mesh is not a technology you buy; the data mesh approach requires cultural transformation, process redesign, and the right enabling infrastructure.

Now we’re ready for Part 2: how to actually implement data mesh. The real challenge isn’t grasping the principles; it’s operationalizing them, and that is where many data mesh implementations struggle in practice.

Decentralization without the right connective infrastructure often replaces one bottleneck with another. Instead of a centralized queue, organizations end up with fragmented systems, inconsistent standards, and unclear ownership boundaries.

Solving this requires the right platform, governance model, and shared infrastructure to connect domains effectively—all areas where DataHub can help.

Are you *really* ready to implement data mesh?

Before you even commit to implementation, three questions will help determine if the timing is right.

- Are you experiencing bottlenecks managing data access across business domains?

- Do your domains have (or can they build) data engineering capacity?

- Is your organization willing to treat this as a cultural shift, not a technology project?

If the answer to all three is yes, you’re in the right position to begin. If you’re unsure about one or two, a pilot with two to three domains (covered below) is the right way to test the model before committing organizationally.

How to implement data mesh in five phases

Data mesh implementation doesn’t require a wholesale organizational transformation on day one. The most successful approaches start focused and expand as patterns stabilize.

Phase 1: Define domains and data products

Start by defining two to three domains with clear boundaries, motivated teams, and well-understood data. Map the data products each domain will own and manage, then begin organizing existing data in your warehouse or lake around these domains.

Choose initial domains strategically. Ideal candidates have clear ownership boundaries, existing data engineering capacity (or willingness to build it), and data products that other teams actively request.

Each domain needs a data product owner: someone accountable for the quality, documentation, and accessibility of that domain’s data products. The role requires both business context and technical understanding. Without it, ownership defaults to whoever happens to be closest to the data, and accountability dissolves.
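To make the ownership rule concrete, here is a minimal sketch of a domain registry that refuses to register a data product without an accountable owner. The `Domain`, `DataProduct`, and `register_product` names are hypothetical illustrations, not part of DataHub or any other library.

```python
from dataclasses import dataclass, field


# Hypothetical types for illustration only; not a DataHub API.
@dataclass
class DataProduct:
    name: str
    owner: str  # the accountable data product owner
    description: str = ""


@dataclass
class Domain:
    name: str
    products: dict[str, DataProduct] = field(default_factory=dict)

    def register_product(self, product: DataProduct) -> None:
        # Reject ownerless products so accountability never defaults to
        # whoever happens to be closest to the data.
        if not product.owner.strip():
            raise ValueError(f"{product.name!r} has no accountable owner")
        if product.name in self.products:
            raise ValueError(f"{product.name!r} already exists in {self.name!r}")
        self.products[product.name] = product


# A two-domain pilot, as suggested for Phase 1.
orders = Domain("orders")
orders.register_product(DataProduct("daily_orders", owner="alice@example.com"))

marketing = Domain("marketing")
try:
    marketing.register_product(DataProduct("campaign_spend", owner=""))
except ValueError as err:
    print(err)  # 'campaign_spend' has no accountable owner
```

Enforcing the owner at registration time, rather than auditing for it later, is the design choice here: a product simply cannot exist without someone accountable for it.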

Where Phase 1 breaks down: Decentralization without discovery

This is the first and most common failure mode: Domain teams begin producing data products, but there’s no unified way to find, understand, or evaluate them across domains. Discovery across all domains, with consistent metadata, search, and quality signals, is what transforms independent data products into an actual mesh. Without it, you haven’t built a mesh; you’ve rebuilt data silos with better branding.
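What separates a mesh from silos is a shared index with consistent metadata. The sketch below illustrates the idea under simplifying assumptions: every domain publishes entries with the same fields into one hypothetical `Catalog`, and a naive keyword search runs across all domains at once. A real catalog uses a proper search index; this only shows why uniform metadata and a single search surface matter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CatalogEntry:
    domain: str
    name: str
    owner: str
    description: str
    quality_passing: bool  # latest quality signal, returned with search hits


class Catalog:
    """Hypothetical cross-domain index: one place to find every data product."""

    def __init__(self) -> None:
        self._entries: list[CatalogEntry] = []

    def publish(self, entry: CatalogEntry) -> None:
        self._entries.append(entry)

    def search(self, keyword: str) -> list[CatalogEntry]:
        # Case-insensitive match on name or description, across all domains.
        kw = keyword.lower()
        return [
            e for e in self._entries
            if kw in e.name.lower() or kw in e.description.lower()
        ]


catalog = Catalog()
catalog.publish(CatalogEntry("orders", "daily_orders", "alice@example.com",
                             "Orders per customer per day", True))
catalog.publish(CatalogEntry("marketing", "customer_segments", "bob@example.com",
                             "Customer segments for campaigns", False))

for hit in catalog.search("customer"):
    print(hit.domain, hit.name, "passing" if hit.quality_passing else "failing")
# orders daily_orders passing
# marketing customer_segments failing
```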

How DataHub helps

DataHub’s Data Domains let you formally define and organize data products within each business unit, providing the structural foundation for mesh architecture from the start. Critically, this includes cross-domain discovery: every data product is searchable, browsable, and enriched with metadata from the moment it’s created—so domain independence never becomes domain isolation.

Phase 2: Build the self-serve platform layer

Before domain teams can operate independently, they need infrastructure that makes independence feasible. This is the step many teams rush past—jumping from “we’ve defined our domains” to “teams should start producing data products” without providing the tooling that makes self-serve actually work.

A dedicated self-serve data platform team should provide domain-agnostic tooling that abstracts away infrastructure complexity: Provisioning, pipeline templates, data ingestion, monitoring, access control, and data quality frameworks. The goal is to reduce the technical barrier so domain teams with reasonable skills can build and maintain data products without deep infrastructure expertise.

To be clear: Self-serve does not mean “figure it out yourself.” It means the platform is designed so that standardized templates, automated provisioning, and clear documentation handle the infrastructure complexity. Data product teams focus on their data and their domain logic—not on managing Kubernetes clusters or configuring access policies from scratch.
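One way to picture “standardized templates and automated provisioning”: the platform team owns a template with monitoring, access, and quality defaults, and a domain team supplies only what is specific to its data. The `PIPELINE_TEMPLATE` keys and the `provision_pipeline` function are hypothetical and not tied to any particular orchestrator.

```python
import copy
from typing import Optional

# Hypothetical platform-owned template; keys are illustrative, not a real tool's schema.
PIPELINE_TEMPLATE = {
    "schedule": "0 * * * *",  # hourly, in cron syntax
    "monitoring": {"alert_channel": "#data-alerts", "freshness_hours": 24},
    "access": {"default_role": "reader"},
    "quality_checks": ["not_null_primary_key", "row_count_nonzero"],
}


def provision_pipeline(domain: str, dataset: str, overrides: Optional[dict] = None) -> dict:
    """Return a pipeline config: platform defaults plus domain-specific overrides."""
    config = copy.deepcopy(PIPELINE_TEMPLATE)  # never mutate the shared template
    config["name"] = f"{domain}.{dataset}"
    for key, value in (overrides or {}).items():
        if isinstance(value, dict) and isinstance(config.get(key), dict):
            config[key].update(value)  # merge one level into nested sections
        else:
            config[key] = value  # replace top-level settings outright
    return config


cfg = provision_pipeline("orders", "daily_orders", {"monitoring": {"freshness_hours": 6}})
print(cfg["name"], cfg["monitoring"])
# orders.daily_orders {'alert_channel': '#data-alerts', 'freshness_hours': 6}
```

The deep copy matters: without it, one domain’s override would silently change the defaults for every domain that provisions after it.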

Where Phase 2 breaks down: Platform underinvestment

Organizations allocate budget and headcount to domain teams but underinvest in the platform that enables them. The result: every domain independently solves the same infrastructure problems, creating inconsistency, duplication, and technical debt that compounds as more domains onboard.

How DataHub helps

DataHub serves as a core component of this platform layer, providing the metadata infrastructure that domain teams rely on for discovery, lineage, quality monitoring, and governance. Rather than each domain building its own approach to these concerns, DataHub provides them as shared services that work consistently across all domains.

Phase 3: Establish data contracts

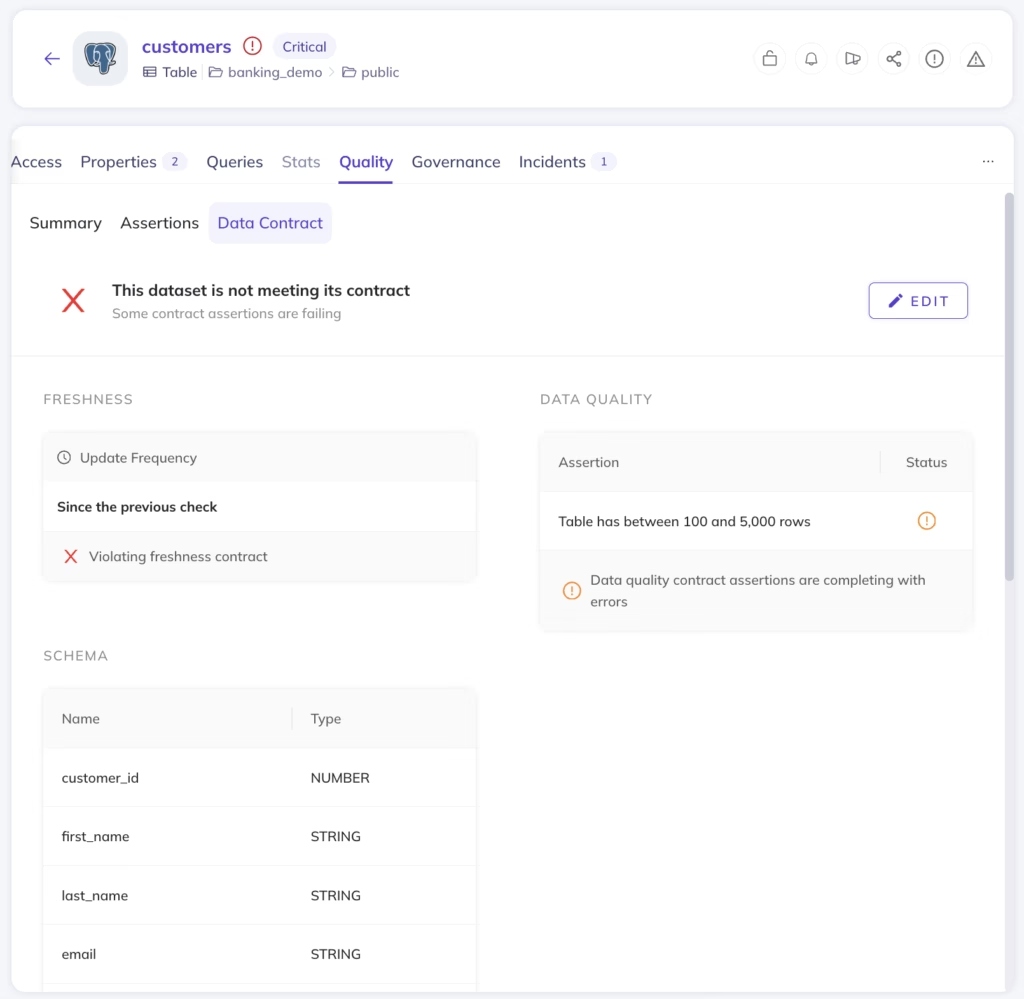

Create a clear set of expectations around what it means to be a data product owner. Data contracts codify what data consumers can depend on: Schema definitions, data quality standards, freshness SLAs, documentation requirements, and ownership accountability.

Contracts should be specific enough to be enforceable and stable enough that consumers can build on them. This isn’t just documentation—it’s the interface specification between domains, analogous to API contracts in a microservices architecture.
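Treating a contract as an interface specification means it can be checked mechanically. Below is a minimal sketch, assuming a contract is just a column-to-type schema plus a freshness SLA and an owner; the `DataContract` class and `schema_violations` method are hypothetical and do not reflect any particular contract standard or DataHub’s Data Contracts format.

```python
from dataclasses import dataclass
from datetime import timedelta


@dataclass
class DataContract:
    product: str
    owner: str
    schema: dict[str, type]   # column name -> expected Python type
    freshness_sla: timedelta  # maximum allowed age of the latest data (declared, checked elsewhere)

    def schema_violations(self, row: dict) -> list[str]:
        """Return readable violations for one record; empty means compliant."""
        problems = []
        for column, expected in self.schema.items():
            if column not in row:
                problems.append(f"missing column {column!r}")
            elif not isinstance(row[column], expected):
                problems.append(
                    f"{column!r} is {type(row[column]).__name__}, expected {expected.__name__}"
                )
        return problems


contract = DataContract(
    product="orders.daily_orders",
    owner="alice@example.com",
    schema={"order_id": str, "amount": float},
    freshness_sla=timedelta(hours=6),
)

print(contract.schema_violations({"order_id": "A-1", "amount": 19.99}))  # []
print(contract.schema_violations({"order_id": 42}))
# ["'order_id' is int, expected str", "missing column 'amount'"]
```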

Where Phase 3 breaks down: Contracts without enforcement

Organizations write data contracts during the initial rollout. Standards are defined for data quality, documentation, classification, and access. And then domain teams, under delivery pressure, gradually drift from those standards because nothing enforces them in real time.

When compliance is checked quarterly (or only when an audit triggers it), the gap between stated standards and actual practice widens steadily. Contracts only work when something monitors them continuously.

How DataHub helps

DataHub’s Data Contracts establish these agreements between domains, with Assertions that monitor freshness, volume, column validity, schema, and custom SQL checks—so contract compliance is verified continuously, not just at review time.
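For readers who want to see what those assertion types actually check, here is a plain-Python sketch of freshness, volume, and column-validity checks evaluated against a table snapshot. It is not DataHub’s Assertions API; the `TableSnapshot` shape, the thresholds, and the function names are assumptions. In practice such checks would run on a schedule, not once.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class TableSnapshot:
    last_updated: datetime
    row_count: int
    null_ids: int  # rows where the primary key column is null


def check_freshness(snap: TableSnapshot, max_age: timedelta, now: datetime) -> bool:
    return now - snap.last_updated <= max_age


def check_volume(snap: TableSnapshot, min_rows: int) -> bool:
    return snap.row_count >= min_rows


def check_column_validity(snap: TableSnapshot) -> bool:
    return snap.null_ids == 0


def evaluate_assertions(snap: TableSnapshot, now: datetime) -> dict[str, bool]:
    # Thresholds would come from the data contract; hard-coded here for brevity.
    return {
        "freshness": check_freshness(snap, timedelta(hours=6), now),
        "volume": check_volume(snap, min_rows=1_000),
        "column_validity": check_column_validity(snap),
    }


now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
snap = TableSnapshot(last_updated=now - timedelta(hours=8), row_count=5_000, null_ids=0)
print(evaluate_assertions(snap, now))
# {'freshness': False, 'volume': True, 'column_validity': True}
```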

“DataHub plays a very critical role to be the bedrock for providing the requisite governance… through its metadata management.”

– Vivek Bijlwan, Principal Product Manager, Airtel

Phase 4: Monitor and enforce data quality

Use metadata validation and data quality assertions to ensure standards are met continuously, not just during initial setup. This includes technical quality (freshness, volume, column validity), data security classifications, and compliance with organizational requirements (ownership assigned, documentation complete, classification applied).

The gap between “we have standards” and “standards are enforced” is where most implementations drift. Continuous monitoring closes that gap.
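The organizational requirements listed above (ownership assigned, documentation complete, classification applied) can be expressed as rules over metadata rather than over the data itself. A minimal sketch, assuming each asset’s metadata is a plain dictionary; the rule names, field names, and the 20-character documentation threshold are hypothetical, and this is not the Metadata Tests interface.

```python
from typing import Callable

# Each rule inspects an asset's metadata and returns True when it complies.
# Field names ("owners", "description", "classification") are illustrative.
Rule = Callable[[dict], bool]

RULES: dict[str, Rule] = {
    "ownership_assigned": lambda m: bool(m.get("owners")),
    "documentation_complete": lambda m: len(m.get("description", "").strip()) >= 20,
    "classification_applied": lambda m: m.get("classification") in {"public", "internal", "confidential"},
}


def failing_rules(metadata: dict) -> list[str]:
    return [name for name, rule in RULES.items() if not rule(metadata)]


assets = {
    "orders.daily_orders": {
        "owners": ["alice@example.com"],
        "description": "One row per order, refreshed hourly from the order service.",
        "classification": "internal",
    },
    "marketing.campaign_spend": {"owners": [], "description": "TODO"},
}

for name, meta in assets.items():
    print(name, failing_rules(meta) or "compliant")
# orders.daily_orders compliant
# marketing.campaign_spend ['ownership_assigned', 'documentation_complete', 'classification_applied']
```

Because the same `RULES` apply to every asset regardless of domain, a new domain inherits the standard the moment its assets are catalogued.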

Where Phase 4 breaks down: No operational layer connecting the principles

This is the biggest architectural gap. Each data mesh principle addresses a specific concern: ownership (who), product thinking (what), self-serve infrastructure (how), and governance (rules). But without a metadata infrastructure layer connecting them, each principle operates in isolation. Domains own data but can’t make it discoverable to other domains. Self-serve tooling exists, but nothing ensures that data products from different domains are interoperable. The principles were designed to work as a system, and systems need connective tissue.

How DataHub helps

DataHub’s Metadata Tests monitor and enforce a central set of standards across all data assets, ensuring documentation, ownership, and classification requirements are met across every domain. This is the connective tissue: A single layer that links data discovery, quality, data lineage, and governance so the principles function as the integrated system they were designed to be.

Phase 5: Move toward federated governance

Adopt a federated computational governance model where domains manage their data products autonomously while a central team oversees governance standards, reviews compliance, and ensures organizational policies are followed.

The key: Enforcement should be automated, and monitoring should be real-time. Manual review processes, even well-intentioned ones, create the same bottlenecks that data mesh was designed to eliminate.
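Here is a small sketch of what “federated” and “computational” can mean together: the central team defines global policies, each domain may add its own stricter policies, and every product is evaluated automatically against the union, with no manual review queue. The policy names, fields, and `evaluate_policies` function are hypothetical.

```python
from typing import Callable

Policy = Callable[[dict], bool]

# Defined centrally; applies to every domain.
GLOBAL_POLICIES: dict[str, Policy] = {
    "has_owner": lambda p: bool(p.get("owner")),
    "pii_is_classified": lambda p: not p.get("contains_pii") or p.get("classification") == "confidential",
}

# Defined by each domain; may add policies but not replace global ones.
DOMAIN_POLICIES: dict[str, dict[str, Policy]] = {
    "finance": {"retention_at_least_7y": lambda p: p.get("retention_years", 0) >= 7},
}


def evaluate_policies(domain: str, product: dict) -> dict[str, bool]:
    local = DOMAIN_POLICIES.get(domain, {})
    clash = GLOBAL_POLICIES.keys() & local.keys()
    if clash:
        raise ValueError(f"domain {domain!r} may not override global policies: {sorted(clash)}")
    policies = {**GLOBAL_POLICIES, **local}
    return {name: policy(product) for name, policy in policies.items()}


ledger = {"owner": "carol@example.com", "contains_pii": True,
          "classification": "confidential", "retention_years": 3}
print(evaluate_policies("finance", ledger))
# {'has_owner': True, 'pii_is_classified': True, 'retention_at_least_7y': False}
```

The name-clash check encodes the federation boundary: domains gain autonomy to add rules, while the central standards stay non-negotiable.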

Where Phase 5 breaks down: Governance that lives in documents, not in systems

This is the slow-burn failure. It doesn’t surface immediately; it compounds. Data teams define data governance policies during rollout, and everything looks good for the first quarter. Then domain teams, under delivery pressure, gradually drift. Naming conventions diverge. Documentation goes stale. Quality thresholds get quietly loosened. By the time anyone notices, the inconsistency is structural, and fixing it means re-governing from scratch.

How DataHub helps

DataHub’s Remote Executor enables domain teams to validate their data products within their own network—without exposing credentials or source data externally. This maintains data sovereignty while participating in federated governance, solving one of the most practical challenges in mesh implementations: How do you enforce quality standards across domains that don’t want to expose their infrastructure?
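The general pattern behind that answer can be sketched without any DataHub specifics: checks run next to the data, inside the domain’s network, and only a summary of pass/fail results is sent to the central governance service. The function name and report shape below are assumptions for illustration, not the Remote Executor’s actual protocol.

```python
import json
from typing import Callable

Check = Callable[[list[dict]], bool]


def run_checks_locally(rows: list[dict], checks: dict[str, Check]) -> str:
    """Runs inside the domain's network; returns a JSON report with no raw data."""
    results = {name: check(rows) for name, check in checks.items()}
    report = {
        "row_count": len(rows),   # aggregate only
        "results": results,       # booleans only, no sample values
        "passed": all(results.values()),
    }
    return json.dumps(report)  # this string is all that is sent to the central service


# Stand-in for records read inside the domain's own network.
local_rows = [{"patient_id": "p1", "age": 34}, {"patient_id": "p2", "age": None}]
checks = {
    "no_null_ids": lambda rs: all(r["patient_id"] is not None for r in rs),
    "age_present": lambda rs: all(r["age"] is not None for r in rs),
}
print(run_checks_locally(local_rows, checks))
# {"row_count": 2, "results": {"no_null_ids": true, "age_present": false}, "passed": false}
```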

DataHub’s own architecture reflects this principle: LinkedIn runs DataHub with federated metadata services owned and operated by different teams. These services communicate with a central search index and graph via Kafka, supporting global search and discovery while maintaining decoupled ownership of metadata. Each team manages its own metadata service; the central layer aggregates for cross-domain discovery and lineage.
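To illustrate the shape of that design (not LinkedIn’s or DataHub’s actual code), the sketch below replaces Kafka with an in-process `queue.Queue`: each team’s metadata service emits change events to a shared topic, and a central consumer folds them into one index used for global search. The `MetadataChangeEvent` fields are assumptions; DataHub’s real change events have their own schema.

```python
import queue
from dataclasses import dataclass


@dataclass(frozen=True)
class MetadataChangeEvent:
    source_service: str  # which team's metadata service emitted the event
    entity: str          # e.g. "orders.daily_orders"
    aspect: str          # e.g. "ownership", "schema"
    value: str


topic = queue.Queue()  # stand-in for a Kafka topic


class TeamMetadataService:
    """Owned and operated by one team; it only publishes to the shared topic."""

    def __init__(self, team: str) -> None:
        self.team = team

    def emit(self, entity: str, aspect: str, value: str) -> None:
        topic.put(MetadataChangeEvent(self.team, entity, aspect, value))


def consume_into_index(index: dict[str, dict[str, str]]) -> None:
    # Central consumer: drain pending events and upsert each aspect per entity.
    while True:
        try:
            event = topic.get_nowait()
        except queue.Empty:
            return
        index.setdefault(event.entity, {})[event.aspect] = event.value


TeamMetadataService("orders-team").emit("orders.daily_orders", "ownership", "alice@example.com")
TeamMetadataService("growth-team").emit("marketing.segments", "ownership", "bob@example.com")

central_index: dict[str, dict[str, str]] = {}
consume_into_index(central_index)
print(sorted(central_index))  # ['marketing.segments', 'orders.daily_orders']
```

Decoupling through the topic is the point: teams never write to the central index directly, so each can run, upgrade, or replace its own service independently.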

DataHub’s data mesh capabilities in action: KPN

KPN, the largest telecommunications provider in the Netherlands, didn’t just implement data mesh internally—they scaled it into a public-facing Data Service Hub spanning healthcare and logistics across the EU.

The challenge was structural. KPN needed domain teams to own data products independently while maintaining the governance, quality, and discoverability standards required to share data products externally, with partners and customers operating in heavily regulated industries. Internal data mesh is hard enough. Extending it beyond organizational boundaries, where you can’t control how consuming teams operate, demands infrastructure that enforces standards automatically rather than relying on shared conventions.

DataHub provided the metadata layer that made this feasible. Cross-domain lineage tracking, which shows how data flows not just between internal teams but across organizational boundaries, was essential to maintaining trust and compliance at scale. Domain teams retained ownership and sovereignty over their data products while the platform ensured every product met the governance and quality thresholds required for external consumption.

The result is one of the largest data spaces in healthcare and logistics within the EU, supporting both KPN’s internal data program and the external Data Service Hub—all running on the same data mesh architecture, with the same governance enforcement, through a single metadata infrastructure layer.
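Lineage across boundaries pays off when you can ask, “Who is affected if this table changes?” A minimal breadth-first traversal over a hypothetical lineage graph shows the query; the asset names are invented, edges point from upstream to downstream, and this illustrates the question rather than how any particular platform computes it.

```python
from collections import deque

# Hypothetical upstream -> downstream edges; the prefix marks the owning domain or partner.
LINEAGE: dict[str, list[str]] = {
    "network.raw_events": ["network.daily_usage"],
    "network.daily_usage": ["billing.invoices", "partner_hub.usage_share"],
    "billing.invoices": ["finance.revenue_report"],
}


def downstream(start: str) -> list[str]:
    """Breadth-first search for every asset that depends on `start`."""
    seen: set[str] = set()
    order: list[str] = []
    frontier = deque([start])
    while frontier:
        node = frontier.popleft()
        for child in LINEAGE.get(node, []):
            if child not in seen:  # skip assets already reached via another path
                seen.add(child)
                order.append(child)
                frontier.append(child)
    return order


impacted = downstream("network.raw_events")
print(impacted)
# ['network.daily_usage', 'billing.invoices', 'partner_hub.usage_share', 'finance.revenue_report']

# Which domains (and external partners) need to hear about the change:
print(sorted({asset.split(".")[0] for asset in impacted}))
# ['billing', 'finance', 'network', 'partner_hub']
```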

“We track the lineage of the individual tables and the sets. And it’s great… DataHub is really good at that.”

– Stefan Driessen, Data Scientist, KPN

Discover how DataHub operationalizes data mesh

Data mesh addresses a real problem: Traditional centralized data architectures that can’t scale to meet the analytical needs of growing organizations. The four principles provide a sound framework for decentralizing data management while maintaining consistency. But the framework only works when those principles are connected by operational infrastructure.

Discovery, lineage, quality monitoring, and governance enforcement across all domains are what transform data mesh from an organizational diagram into a functioning architecture. DataHub provides that connective layer, and it practices data mesh internally, running federated metadata services at scale that connect decoupled domain ownership with unified global discovery. The architecture isn’t theoretical. It’s in production.

Ready to see DataHub’s data mesh capabilities in action? Check out our product demos.

