Introducing DataHub Open Source Skills Registry

This week, we’re releasing DataHub Skills, an open source skills library for orchestrating complex data workflows with DataHub context in tools like Claude Code, Claude Cowork, Cursor, OpenAI Codex, and more — enabling automation across everything from answering data analytics questions to curating knowledge, monitoring quality, and beyond.

Your agents shouldn’t have to guess about your data

Every organization has a shared understanding of its data. What’s trustworthy, what’s sensitive, how things connect, what the business terms actually mean. In many organizations, DataHub is where that context lives — as curated descriptions, context documents, glossary terms, ownership, lineage, quality signals, usage patterns, sample queries, and more. It’s a complete record of your entire data landscape that captures not just what data exists, but what it’s for and whether you can trust it.

The problem is that by default, your AI agents can’t access any of it. They can call APIs and execute code, but without the trust signals, business definitions, and institutional knowledge your team has already captured, they’re operating blind. They guess which table to use. They make assumptions about what a column means. And they have no way to tell the difference between the canonical revenue metric and a deprecated experiment.

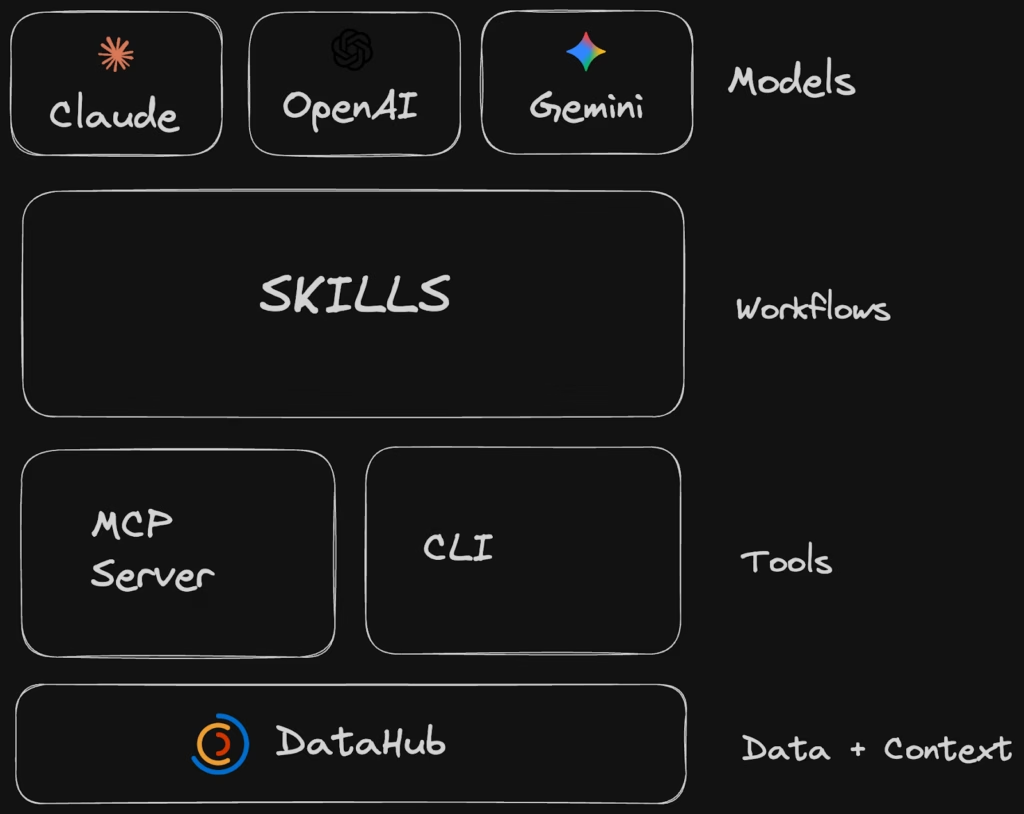

DataHub Skills help change that. Built to be compatible with DataHub’s MCP Server, CLI, and APIs, they give agents a direct line into the context your organization has already built, so agents can search, reason, and act with the same understanding as your best data analyst.

Tools vs. instructions: Why skills matter

If you’ve been following the agent ecosystem, you’ve probably heard about MCP (Model Context Protocol). MCP gives agents access to tools, which enable them to fetch information and perform actions autonomously or on behalf of a user.

Skills, on the other hand, provide high-level recipes and guides that help agents use those tools effectively. They bridge the gap between raw capabilities and complex multi-step workflows, helping ensure an agent performs a task reliably and consistently.

MCP gives agents tools. The ability to perform discrete actions: search a catalog, read a schema, tag a column. Necessary, but not sufficient! Having every tool in the shed doesn’t mean you know how to build a house.

Skills give agents instructions. The knowledge of how to combine those tools into real workflows, in the right order, with the right judgment at each step. In the case of DataHub, how to set up data quality monitoring, or how to create a business glossary.

MCP provides capabilities. Skills provide domain-specific knowledge. You need both!
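To make the distinction concrete, here is a minimal Python sketch. Everything in it is hypothetical: the in-memory `CATALOG`, the three tool functions, and `tag_pii_skill` are stand-ins invented for illustration, not DataHub or MCP APIs. A real skill is a set of instructions the agent reads rather than a Python function, so this only models the idea of "discrete tools, combined in order with a judgment step".

```python
# Hypothetical in-memory catalog standing in for DataHub (illustration only).
CATALOG = {
    "analytics.revenue_daily": {
        "columns": ["order_id", "email", "amount"],
        "tags": {},
    },
}


# Tools: discrete actions, comparable to what an MCP server exposes.
def search_catalog(query: str) -> list:
    """Return the names of tables whose name contains the query string."""
    return [name for name in CATALOG if query in name]


def read_schema(table: str) -> list:
    """Return the column names of a table."""
    return list(CATALOG[table]["columns"])


def tag_column(table: str, column: str, tag: str) -> None:
    """Attach a tag to one column."""
    CATALOG[table]["tags"][column] = tag


# Skill: the knowledge of how to combine the tools, in order.
def tag_pii_skill(query: str, pii_hints: set) -> dict:
    """Find tables, inspect their schemas, and tag columns that look like PII."""
    tagged = {}
    for table in search_catalog(query):            # step 1: search
        for column in read_schema(table):          # step 2: read the schema
            if column in pii_hints:                # step 3: judgment call
                tag_column(table, column, "PII")   # step 4: act
                tagged.setdefault(table, []).append(column)
    return tagged
```

Calling `tag_pii_skill("revenue", {"email"})` on this toy catalog tags the `email` column of `analytics.revenue_daily`. None of the individual tools knows that order or that decision rule; the skill supplies it.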

What we shipped

We’re launching the registry with five skills to help you work with DataHub:

- datahub-setup — Establish a connection to your DataHub instance (OSS or Cloud). Configures authentication and connectivity so agents can start working immediately.

- datahub-search — Explore data assets, business definitions, documentation, and more. This is the skill that lets agents find trustworthy data the same way your best analyst would: by leveraging descriptions, glossary terms, ownership, usage stats, and data quality signals — not just table names.

- datahub-lineage — Understand the origins and downstream consumers of data assets and columns. Agents can trace how data flows through your stack, identify impact radius for changes, and understand dependencies before taking action.

- datahub-enrich — Create glossaries, domains, and data products. Add descriptions, tags, glossary terms, owners, and structured properties to assets. This is the skill that turns agents into data stewards — able to curate metadata at a scale no human team can match.

- datahub-quality — Find and report on unhealthy assets, perform root cause analysis, and manage data quality incidents. For DataHub Cloud customers, agents can also create freshness, volume, and column-level data quality checks on your most important tables and columns.

Each skill knows its scope and hands off cleanly to the next. A search can flow into a lineage trace, which can surface a quality issue, which can trigger enrichment, enabling you to model complex workflows across data analytics, governance, and observability.

Building agents with skills

Skills are building blocks: each one provides instructions for performing a complex workflow. Combined, they enable entirely new classes of workflows, which can in turn be packaged into agents.

Some agents that DataHub skills can help you build include:

Data Analytics Agent (Text-to-SQL)

Build an agent that answers natural language business questions. The agent uses datahub-search to find and understand trustworthy data (leveraging descriptions, glossary terms, sample queries, usage stats, and quality signals), then hands off to a data warehouse tool or skill (Snowflake, BigQuery, Databricks) to execute the generated SQL.
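As a rough illustration of "finding trustworthy data", the sketch below ranks candidate tables by the kinds of signals listed above. The `Candidate` fields, the sample tables, and the scoring weights are all assumptions made up for this example. They are not how datahub-search works internally, and a real agent would read these signals from DataHub rather than from a hard-coded list.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    name: str
    has_description: bool
    glossary_terms: tuple
    monthly_queries: int
    failing_checks: int
    deprecated: bool


def trust_score(c: Candidate, term: str) -> float:
    """Combine trust signals into one number; the weights are arbitrary."""
    if c.deprecated:
        return 0.0
    score = 2.0 if term in c.glossary_terms else 0.0   # business definition matches
    score += 1.0 if c.has_description else 0.0         # someone documented it
    score += min(c.monthly_queries, 1000) / 1000       # usage, capped at 1.0
    score -= 1.0 * c.failing_checks                    # quality penalty
    return score


def pick_table(candidates: list, term: str) -> Optional[Candidate]:
    """Return the highest-scoring candidate, or None if nothing looks trustworthy."""
    ranked = sorted(candidates, key=lambda c: trust_score(c, term), reverse=True)
    if not ranked or trust_score(ranked[0], term) <= 0:
        return None
    return ranked[0]


# Hypothetical search results for the question "What was revenue last month?"
CANDIDATES = [
    Candidate("analytics.revenue_daily", True, ("Revenue",), 800, 0, False),
    Candidate("sandbox.revenue_experiment_v2", True, ("Revenue",), 50, 0, True),
    Candidate("staging.revenue_tmp", False, (), 1200, 1, False),
]
```

With these inputs, `pick_table(CANDIDATES, "Revenue")` selects `analytics.revenue_daily`: the deprecated experiment scores zero, and the heavily queried staging table is cancelled out by its failing check. Only after this choice would the agent hand off to a warehouse tool to run SQL.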

Data Quality Agent

Build an agent that autonomously provisions data quality checks and reports on the health of your data estate. It uses datahub-search to find important tables by usage, datahub-quality to add assertions and monitor incidents, and generates daily health reports segmented by domain or owning team. No more manually configuring checks one table at a time.
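The selection and reporting steps of such an agent might look like the following sketch. The `TABLES` records, their field names, and the "open incident means unhealthy" rule are hypothetical simplifications; in a real agent the usage numbers and incidents would come from DataHub through datahub-search and datahub-quality.

```python
from collections import defaultdict

# Hypothetical table records (illustration only).
TABLES = [
    {"name": "orders", "domain": "Sales", "monthly_queries": 900, "open_incidents": 0},
    {"name": "refunds", "domain": "Sales", "monthly_queries": 300, "open_incidents": 2},
    {"name": "sessions", "domain": "Product", "monthly_queries": 700, "open_incidents": 1},
    {"name": "scratch_tmp", "domain": "Product", "monthly_queries": 5, "open_incidents": 0},
]


def most_used(tables: list, n: int) -> list:
    """Pick the n most-queried tables as the ones worth monitoring first."""
    return sorted(tables, key=lambda t: t["monthly_queries"], reverse=True)[:n]


def health_report(tables: list) -> dict:
    """Group table names by domain, split into healthy and unhealthy."""
    report = defaultdict(lambda: {"healthy": [], "unhealthy": []})
    for t in tables:
        bucket = "unhealthy" if t["open_incidents"] > 0 else "healthy"
        report[t["domain"]][bucket].append(t["name"])
    return dict(report)
```

Running `health_report(most_used(TABLES, 3))` leaves out the rarely used `scratch_tmp` and reports `refunds` and `sessions` as unhealthy under their respective domains. Swapping the grouping key from `domain` to an owner field would give the per-team view instead.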

Data Steward Agent

Build an agent that applies descriptions and compliance-related glossary terms to tables and columns, then reports on coverage. It uses datahub-search to find target assets by platform, domain, or ownership, cross-references schema metadata against your glossary with datahub-enrich, and applies terms and descriptions. Then it generates reports on where sensitive data exists and where PII appears across the organization.
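The cross-referencing step can be pictured with a deliberately naive sketch. The glossary terms, keyword sets, and sample schemas below are invented for illustration, and matching on column-name tokens is an assumption about how one might start, not a description of what datahub-enrich does. A production steward agent would also weigh descriptions and sample values, and would likely leave low-confidence matches for human review.

```python
# Hypothetical glossary: term name -> column-name keywords that suggest it.
GLOSSARY_KEYWORDS = {
    "Email Address": {"email"},
    "Phone Number": {"phone", "mobile"},
}


def suggest_terms(column: str) -> list:
    """Suggest glossary terms whose keywords appear as tokens in the column name."""
    tokens = set(column.lower().split("_"))
    return sorted(term for term, kws in GLOSSARY_KEYWORDS.items() if tokens & kws)


def sensitive_columns(schemas: dict) -> dict:
    """Map each table to its columns that matched at least one glossary term."""
    result = {}
    for table, columns in schemas.items():
        matches = {}
        for col in columns:
            terms = suggest_terms(col)
            if terms:
                matches[col] = terms
        if matches:
            result[table] = matches
    return result


# Hypothetical schemas, as a search step might return them.
SCHEMAS = {
    "customers": ["id", "contact_email", "mobile_phone"],
    "orders": ["id", "amount"],
}
```

Here `sensitive_columns(SCHEMAS)` flags `contact_email` and `mobile_phone` in `customers` and nothing in `orders`, which is the raw material for a "where does PII appear" report.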

How to get started

Installation

DataHub Skills work with Claude Code, Cursor, OpenAI Codex, GitHub Copilot, Gemini CLI, Windsurf, and other Agent Skills-compatible tools.

The quickest path is the Skills CLI, which auto-detects your installed agent and sets things up:

```bash
npx skills add datahub-project/datahub-skills
```

Or install for a specific platform:

```bash
# Claude Code
claude plugins install datahub-skills --from github:datahub-project/datahub-skills

# Cursor
npx skills add datahub-project/datahub-skills -a cursor

# GitHub Copilot
npx skills add datahub-project/datahub-skills -a github-copilot

# OpenAI Codex
npx skills add datahub-project/datahub-skills -a codex

# Gemini CLI
npx skills add datahub-project/datahub-skills -a gemini-cli

# Windsurf
npx skills add datahub-project/datahub-skills -a windsurf
```

Full installation instructions and manual setup options are in the repository README.

Go build something

The repo is live at github.com/datahub-project/datahub-skills. Install the skills, connect them to your DataHub instance, and ask a question about your data.

DataHub has always grown the way open-source projects grow best — through practitioners who hit real problems and built their way out of them. That community is 14,000+ strong in Slack today, and building in the open is what made that possible.

The five skills we’re shipping cover the most common workflows, but the registry is meant to grow. If you’re already in the DataHub community, you know where to find us. If you’re not, join the DataHub community and start building.

Your agents have been operating without the context your team spent years building. Now they don’t have to.
