DataHub: The Semantic Backbone of Enterprise Data Analytics Agents

What Pinterest’s data analytics agent reveals about the context layer every AI agent needs

Last week, the Pinterest engineering team published an incredibly thorough deep dive about how they built the most widely adopted AI agent at their company — an analytics agent that helps analysts discover tables, find reusable queries, and generate validated SQL from natural language. It now sees 10x the usage of any other internal tool at Pinterest.

The thesis of their post is clear: at enterprise scale — 100,000+ analytical tables, 2,500+ analysts and dozens of domains — raw technical metadata isn’t enough. You need rich semantic context: what tables mean, how they relate, which ones are trustworthy, how metrics are actually calculated. And crucially, that context isn’t something you have to create from scratch. It already exists as latent signal in the exhaust of real data analysts: the joins, filters, aggregations, queries that they are already using to answer mission-critical business questions.

Pinterest’s system captures, extracts, and makes these signals accessible to their agent, creating a self-reinforcing loop where every analyst query enriches the knowledge base that helps the next analyst (or agent) do better work.

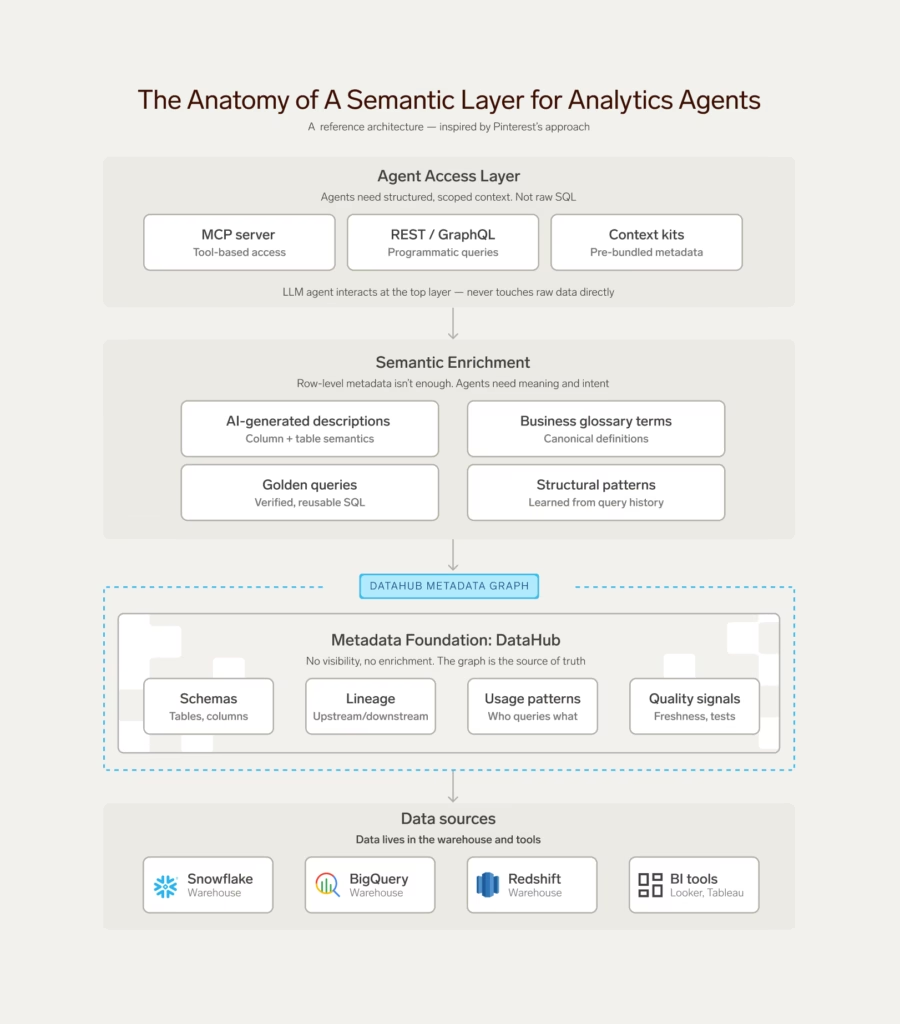

What’s particularly relevant is the foundation powering all of it: the catalog. In Pinterest’s architecture, DataHub serves as the central store for semantic context: table governance, ownership, column-level semantics via glossary terms, and the metadata that feeds everything from table discovery to AI-generated documentation. Their post is explicit: this foundation of semantic context within DataHub “laid the groundwork for everything that followed.”

We’ve seen the same pattern emerging across multiple organizations that we work closely with. The teams that are making real progress towards data democratization are doing so through analytics agents that start with the semantics — a structured understanding of what your data means, not just where it lives or how it’s formatted. Increasingly, we are seeing organizations start with DataHub.

This post unpacks why that matters, what Pinterest’s approach teaches us about building agents that deliver trustworthy results, and how DataHub can provide a launchpoint for every organization to replicate their success as analytics agents move from demos to production.

The context problem

Imagine you’ve just hired a brilliant new analyst to the data team at your e-commerce company. During their first week, the customer success team reaches out asking for help “understanding how customer retention is tracking for the cohort onboarded in June.”

Your new analyst isn’t going to have the answer on day one. They’ll need time to get their bearings by chatting with coworkers, reading old Slack threads, reverse-engineering queries from the team’s Looker Explores. They’ll gradually build a mental model of which tables are trustworthy, which columns mean what, how things join together, and what “retention” even means in this context. Ask any data team manager whether this kind of ramp-up is unusual, and they’ll all tell you no. Context gathering is a fundamental prerequisite to correctly interpreting the enterprise’s data.

The story with AI agents is really no different. If you want any chance of accurately answering mission-critical business questions, your agent needs access to the same institutional context your analyst collected during those first weeks on the job. The key challenge we face in deploying analytics agents to production at enterprises is largely about how to artificially emulate the same type of environment; how to provide an agent that starts with an empty context window access to tools to progressively learn about relevant parts of the business and its data.

Emerging industry benchmarks are beginning to demonstrate the importance of domain-specific context to the success of agentic analytical workflows, like converting natural language text into valid SQL. The BIRD benchmark was designed in part to measure exactly this: what happens when you give models semantic context like business definitions, value descriptions, and domain terminology alongside the raw schema? One study found that adding this type of context improved accuracy by up to 20 percentage points over raw schema alone. Today, 29 of the top 30 submissions on the BIRD leaderboard depend on semantic evidence to achieve their high scores. Not better models, just better context.

Lessons from Pinterest’s analytics agent

Pinterest’s blog post is one of the best public accounts of production analytics agent architecture we’ve seen to date. I’d encourage you to read the full thing, but here are the unique architectural choices and insights that stood out most to us:

They used AI to document their data at scale. Pinterest generated table and column descriptions automatically using lineage, existing docs, glossary terms, and example queries. This cut manual documentation effort by about 40%, with humans staying in the loop for the most critical assets. It’s a pretty shrewd tradeoff: your top-tier tables get expert curation, but for the thousands of others that would otherwise sit undocumented, an AI-generated description can be the difference between an agent that can find and use the table effectively and one that can’t.

They built a shared vocabulary. Using Glossary terms, Pinterest standardized business concepts like Advertiser Id to give them a common language used to link columns representing important business concepts across tables. What’s worth noting is how they scaled this: they analyzed join patterns in query logs, then propagated glossary terms automatically to cover >40% of their columns without manual tagging.

They turned query history into semantic insight. This is the part of Pinterest’s post that stood out most. Using an LLM, the team reverse-mapped each query into a semantic description of the business question it was designed to answer, then made that searchable. The result is that the system is more likely to identify a query that answers your exact business question based on queries that have been run by others. This creates a self-reinforcing positive feedback loop: every time an analyst runs a query, they are making the data analyst agent smarter.

What’s special about Pinterest’s analytics agent is it gets better the more it is used, which may be why it quickly became the most-used AI agent at the company, with 10x the usage of the rest. Most organizations don’t have the resources to build all of this from scratch. But the architectural patterns and primitives — semantic documentation, shared vocabularies, query-derived insights, metadata-aware ranking — are exactly the foundations that DataHub provides.

DataHub: The semantic backbone for your analytics agent

Pinterest spent years building the semantic infrastructure behind their agent — dedicated teams for governance, documentation, vector search, and agent integration. A multi-year cleanup that took their warehouse from 400K tables down to 100K. That investment paid off, but it’s not a path most organizations can realistically follow.

The architectural patterns they validated, though, are replicable. And most of them map directly to capabilities that DataHub Cloud provides to help you get started.

DataHub mines query insights from your warehouse automatically. By ingesting query history and usage data from Snowflake, Databricks, BigQuery, and Redshift, DataHub builds an understanding of popular reference queries, join patterns, filter conditions, and aggregation logic. You can also curate golden queries — validated, annotated SQL that serves as canonical ground truth for how to query your most important assets. This is the same type of analyst-derived signal that Pinterest spent years extracting and indexing.

DataHub generates semantic descriptions for your data assets. AI Documentation produces descriptions for tables, columns, and queries using lineage, profiling statistics, sample values, and related metadata. Machines generate the starting point, humans review and refine over time. Custom instructions ensure the output matches your organization’s terminology and standards.

DataHub gives you semantic search across your entire data landscape. Tables, columns, dashboards, glossary terms, knowledge documents — all searchable, with ranking informed by popularity, freshness, and governance metadata completeness. The answer to “how do we measure retention?” might live in a Looker dashboard, a glossary definition, or a golden SQL query. DataHub treats all of these as first-class searchable entities, so your agent has access to the same rich context that a seasoned analyst develops over years.

DataHub lets you classify, organize, and monitor your data so your agent can separate signal from noise. Domains align assets to teams and business units. Business Glossary terms create a shared vocabulary that resolves ambiguity across tables. Assertions and incidents give your agent visibility into data quality health. Whether you’re tiering your tables, implementing a medallion architecture, or simply flagging which assets are production-ready, this is how you give your agent the governance awareness that Pinterest describes as foundational.

And these capabilities compound over time. As your team documents assets, attaches glossary terms, curates golden queries, and defines data quality assertions, the agent is more likely to get it right. Agent-authored queries in turn begin to feed back into the system as reference points for future questions. The result is a shared, curated knowledge base that your agent can draw on to accurately answer your organization’s most critical business questions.

Start building

All of this context is only useful if your agent can easily access it. DataHub provides two key components that make the semantic layer available wherever you’re building:

The DataHub MCP Server exposes the semantic layer through a hosted Model Context Protocol server included with every instance of DataHub Cloud. If you’re building with Claude, ChatGPT, or any MCP-compatible framework, DataHub’s tools for searching across all of your data, getting reference queries, and more are instantly available as a native tool call.

The Agent Context Kit provides integration guides for specific frameworks: LangChain, Snowflake Intelligence, Google ADK, Vertex AI, and Microsoft Copilot Studio. Wherever you’re building agents, DataHub’s semantic context is a few lines of integration away. Pinterest’s blog is proof that this approach works at scale. DataHub’s goal is to make the same foundation accessible to every organization, so that following in Pinterest’s footsteps in building a reliable data analytics agent is possible within weeks, not years.

Get started with the DataHub MCP Server, Agent Context Kit, and AI Documentation. Or join us on Slack— we’re always happy to talk about what you’re building.

Recommended Next Reads