Accelerating Connector Development with AI Skills

February 2026 Town Hall Highlights

February 2026 Town Hall Highlights

Building custom metadata connectors manually is time-intensive and difficult to scale.

At our February Town Hall, we explored how teams are accelerating connector development with DataHub Skills, an AI-assisted development toolkit. We also discussed how DataHub is evolving as the context layer for modern data systems.

The session featured a live demo of DataHub Skills generating connectors in just a few hours, a look at Foursquare’s geospatial platform using DataHub as its discovery engine, and a forward-looking roadmap across the AI, Discover, Observe, and Govern pillars.

This recap highlights the key demos, architectural insights, and roadmap updates from the session.

Accelerate connector development with DataHub Skills

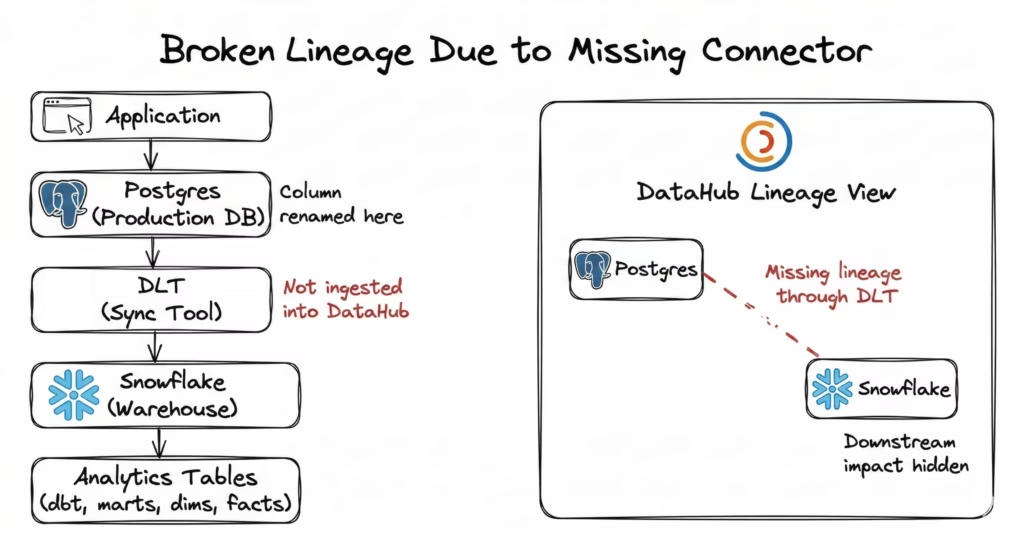

Modern data stacks evolve quickly, introducing new ingestion tools faster than connectors can be built. When a system is not supported by an ingestion connector, lineage gaps appear.

For example, if your team uses dlt, an open-source data loading library, to move data from Postgres to Snowflake without a supported connector, DataHub cannot observe that synchronization layer in its lineage graph. As a result, downstream dependencies remain hidden.

Without full lineage visibility, a developer might rename a column in Postgres without realizing that it feeds Snowflake tables and dbt models.

The issue is not just broken dashboards. It is missing context across the data pipeline.

Historically, building a new ingestion source required significant manual effort. Developers had to:

- Research the target system’s APIs

- Understand the DataHub metadata model

- Scaffold code from scattered examples

- Wait through multiple review cycles

That approach does not scale with the pace of modern tooling. To address this challenge, we introduced DataHub Skills, an AI-assisted development toolkit designed to reduce connector development friction. These skills, available on skills.sh, integrate with AI coding assistants such as Claude Code and Gemini to automate research, planning, and quality checks.

“DataHub is only as powerful as the metadata that you bring into it. As new tools emerge, lineage gaps grow. These AI skills help teams keep up.”

— Maggie Hays, Founding Product Manager, DataHub

How do DataHub Skills work together?

Connector development typically follows three stages: understanding standards, planning the architecture, and validating the implementation.

DataHub Skills formalize each stage into a reusable workflow.

| Skill | Core function | Practical benefit |

| load_standards | Applies the same coding and testing standards used by DataHub maintainers during review. | Aligns connectors with production requirements before implementation begins. |

| datahub-connector-planning | Analyzes the target system, maps entities to the DataHub metadata model, and generates a structured development plan. | Reduces manual documentation review and architectural analysis. |

| datahub-connector-pr-review | Validates structure, testing coverage, and conventions before a pull request is opened. | Reduces back-and-forth review cycles prior to submission |

Instead of relying on informal guidance or reverse-engineering existing connectors, developers start with explicit standards, produce a structured plan, and validate changes before opening a pull request.

This shifts the workflow from trial and error to guided implementation.

Lineage in practice: Generating a dlt connector

In the live demo, DataHub Founding Product Manager, Maggie Hays, walked through how to use DataHub Skills to generate a connector for dlt.

This segment focuses on the planning workflow and the artifacts the skill generated.

How does connector ingestion change the development workflow?

Before ingestion, the Postgres dataset showed no downstream lineage. The graph appeared isolated.

After ingestion:

- A one-hop lineage view connecting Postgres to Snowflake

- dbt models visible downstream

- Column-level lineage exposing field-level dependencies

If a developer renames the “white rating” column, DataHub immediately surfaces affected downstream entities.

The improvement is not just faster connector development. DataHub also makes downstream dependencies visible across the full pipeline.

Together, these DataHub Skills shift connector development from an ad hoc process to a structured, review-ready workflow. For teams expanding their data stack, this reduces friction while improving metadata completeness.

Availability and community contribution

The DataHub GitHub repository and skills.sh host the DataHub Skills toolkit.

To make things even more exciting, we’ll send a DataHub swag box to:

- The first 10 community members who successfully merge a pull request using these tools

- The first 10 contributors who help review connectors using the skills

Community participation expands connector coverage and strengthens lineage visibility across the ecosystem.

Foursquare spotlight: Democratizing geospatial intelligence

Geospatial data is notoriously difficult to unify across sources. Datasets often use incompatible projections, inconsistent schemas, and require specialized tooling.

Foursquare aggregates and indexes more than 50 public datasets into a universal H3 grid system known as the Spatial H3 Hub.

To make these indexed datasets discoverable and securely accessible, they rely on DataHub as the discovery engine and governed context layer behind the H3 Hub.

“DataHub basically acts as the discovery engine for the H3 Hub.”

— Shashir Ambasta, Tech Lead, Geospatial Intelligence Platform, Foursquare

Through its Iceberg REST Catalog, DataHub provides:

- A unified metadata layer for H3-indexed datasets

- A discovery and catalog layer for datasets managed as Apache Iceberg tables

- Policy-based access control for secure dataset access

- A GraphQL API that powers dataset discovery for internal applications

Instead of querying storage systems directly, users and services interact with DataHub to discover, filter, and access datasets across the entire H3 Hub.

This metadata foundation also powers Foursquare’s Spatial Agent, which retrieves relevant datasets through DataHub and generates SQL queries and visualizations in response to natural-language questions.

DataHub serves as the connective layer that enables dataset discovery, governance, and programmatic access across Foursquare’s geospatial platform.

Stay tuned for a dedicated deep dive into Foursquare’s architecture in an upcoming post.

DataHub product roadmap 2026: AI, Discover, Observe, and Govern

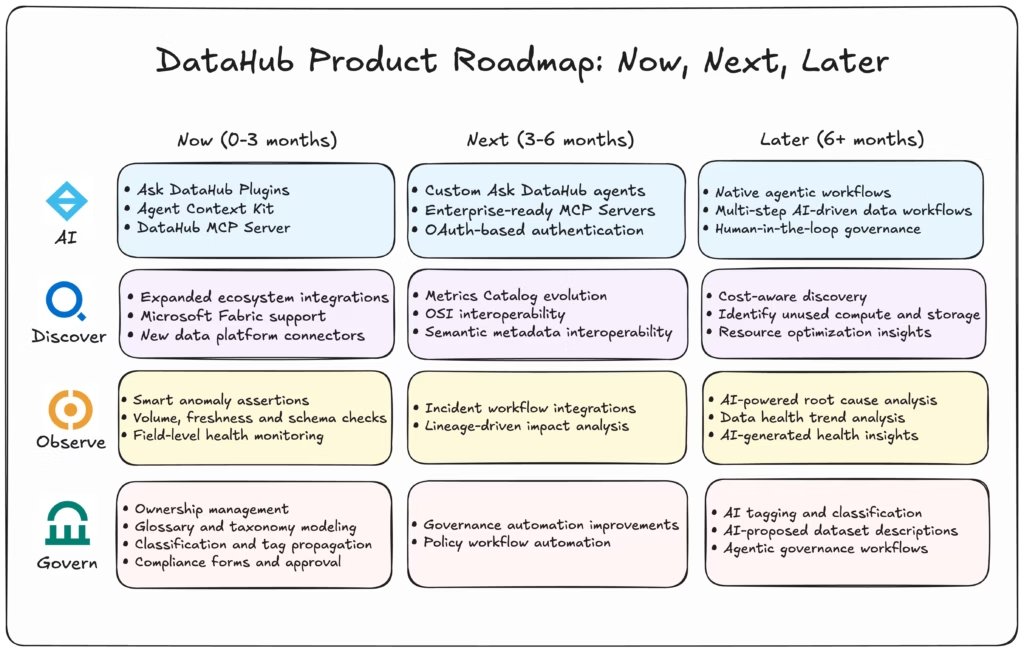

The DataHub roadmap focuses on four pillars: AI, Discover, Observe, and Govern.

James Mayfield, VP of Product at DataHub, framed the roadmap as a shift from documenting the enterprise data landscape to actively operating it. It moves DataHub from a human-centric metadata map to a context platform that both humans and AI agents navigate.

The roadmap is structured across three horizons: now (0–3 months), next (3–6 months), and later (6+ months).

AI and context: From human productivity to agent-driven workflows

DataHub’s AI vision centers on enterprise context.

Teams use DataHub to find trustworthy data, understand lineage, define data quality checks, and monitor compliance policies.

“Tomorrow, the big bet that we’re making is that both humans and agents will be able to leverage DataHub.”

— James Mayfield, VP of Product, DataHub

Now: Making enterprise context accessible to humans and AI tools

Today, DataHub exposes structured enterprise context to both users and external systems. It acts as a programmable context layer built on the metadata graph.

DataHub provides structured enterprise context through three mechanisms:

- Ask DataHub Plugins: Extend Ask DataHub with MCP-based integrations that retrieve context and trigger actions across external systems such as GitHub, dbt, and Snowflake.

- Agent Context Kit: A toolkit for building AI agents that access DataHub’s metadata graph and enterprise context from platforms like LangChain, Snowflake Intelligence, or Crew.ai.

- DataHub MCP Server: Provide secure, programmatic access to DataHub’s metadata graph through the Model Context Protocol (MCP), enabling AI tools and agents to retrieve structured enterprise context.

This enables AI systems to retrieve structured enterprise metadata across tables, lineage, dashboards, and documentation.

Next: Enterprise-ready agent infrastructure

The next phase focuses on production readiness, secure access, and deeper cross-tool workflows.

- Custom Ask DataHub agents

- Enterprise-ready MCP servers

- OAuth-based user authentication

- Broader MCP-compatible tool support

This stage strengthens governance and security while enabling richer cross-system AI workflows.

Later: Native agentic workflows inside DataHub

Over time, the roadmap moves from exposing context to orchestrating workflows directly within DataHub.

Future capabilities include:

- Native agentic workflows inside DataHub

- Multi-step AI-driven data workflows

- Human-in-the-loop governance automation

This shift moves DataHub from documenting the data landscape to actively operating it through governed, AI-driven workflows grounded in enterprise context.

Discover: From trusted data search to full supply chain visibility

DataHub enables teams to discover and understand trustworthy data across the enterprise.

Today, DataHub enables teams to:

- Search across datasets, dashboards, pipelines, and transformations

- Trace asset-level and column-level lineage across platforms

- Manage ownership, documentation, classification, and governance metadata

- View usage statistics and update history to evaluate data reliability

Together, these capabilities form the ground truth for understanding how data moves through the enterprise.

Lineage, usage signals, and governance metadata allow teams to assess impact, trace dependencies, and make informed decisions.

The next phase expands discovery beyond human workflows. Both humans and agents will need to navigate the full enterprise data supply chain, from upstream operational tables to downstream dashboards, metrics, and models.

Now: Expanding ecosystem coverage and semantic interoperability

Strong discovery depends on the breadth of ingestion. The more systems connected, the more complete the lineage graph.

DataHub is expanding integration coverage across:

- Microsoft Fabric ecosystem, including Data Factory and OneLake

- Continued support and expansion across Azure services

- Connectors for Dataplex, Flink, Monte Carlo, and Informatica

Next: Metrics catalog and Open Semantic Interchange (OSI)

A major focus within discovery is the evolution of the Metrics Catalog and participation in Snowflake’s Open Semantic Interchange (OSI).

The goal is to:

- Define canonical metrics and semantic models

- Support two-way metadata synchronization

- Connect to metric definitions across Snowflake, dbt, Tableau, and other tools

- Enrich metrics with semantic descriptions and AI-friendly context

By standardizing semantic metadata, DataHub enables both humans and agents to reason about metrics, dimensions, time rollups, and definitions consistently across platforms.

This strengthens discovery at the semantic layer and not just at the dataset level.

Later: Cost-aware discovery powered by lineage

DataHub is making discovery operational.

With a complete end-to-end lineage view, DataHub can identify:

- Compute resources that are not consumed downstream

- Storage that is rarely queried

- Transformation pipelines generating low-value outputs

The roadmap includes surfacing cost-saving opportunities directly from lineage insights, helping teams take action on assets that consume resources without delivering value.

Observe: From monitoring to AI-powered resolution

Observability at DataHub is evolving from real-time monitoring toward AI-assisted resolution and prevention.

Today, teams rely on DataHub to detect data issues and manage incidents through human-driven workflows.

Over time, observability becomes more adaptive, automatically prioritizing critical datasets, assisting with root cause analysis, and preventing degraded data from reaching production systems or AI agents.

Now: Strengthening anomaly detection with smart assertions

The immediate focus is improving detection across the data landscape.

DataHub Cloud is enhancing:

- Smart assertions for anomaly detection

- Monitoring of volume, freshness, and schema changes

- Field-level health monitoring for key datasets

These capabilities help teams spot unexpected changes before they impact production systems.

Detection remains the foundation of effective observability.

Later: AI-assisted resolution and health intelligence

Once detection is mature, the focus shifts toward resolution and prevention.

Incident management uplifts

DataHub is enhancing resolution workflows through:

- Integrations with Jira, PagerDuty, and other incident systems

- Lineage-driven impact analysis to identify upstream and downstream dependencies

- AI-driven root cause analysis with proposed remediation

- Customizable downstream notifications and soft banners on impacted assets

The goal is to reduce mean time to resolution (MTTR) by connecting anomalies directly to impact and ownership.

Data health trends and AI-generated insights

Beyond incident response, observability becomes proactive.

Upcoming enhancements include:

- Interactive data health dashboards

- Trend analysis across assertions and incidents

- AI-generated insights surfaced directly within health views

- Drill-down capabilities to investigate patterns over time

This enables organizations to move from reactive monitoring to continuous improvement of data quality.

“We want to leverage AI to put monitors on the most important datasets at your organization.”

— James Mayfield, VP of Product, DataHub

Over time, observability moves beyond alerting humans. It automatically identifies critical assets, learns normal patterns, and prevents degraded data from reaching downstream consumers or AI agents.

Observability shifts from detecting incidents to actively protecting the data supply chain.

Govern: From shift-left controls to AI-assisted governance

Governance is a critical pillar of the DataHub platform.

It is a central part of how many prospects, customers, and open-source community members engage with DataHub, particularly around ownership, policy enforcement, and compliance.

Now: Operationalizing shift-left governance

Today, DataHub provides structured governance capabilities across the enterprise data ecosystem, including:

- Ownership management and accountability tracking

- Glossary terms and taxonomy modeling

- Classification workflows and tag propagation

- Compliance forms and approval workflows

- Metadata tests to monitor and enforce governance standards

- Bi-directional synchronization with Snowflake, Databricks, and BigQuery

Together, these capabilities create a strong governance foundation.

DataHub embeds policies, definitions, and compliance signals directly into the metadata lifecycle.

This shift-left approach improves audit readiness and strengthens compliance posture over time.

Later: Reducing governance toil with AI assistance

The next phase focuses on reducing the cost and effort of maintaining strong governance at scale.

Planned capabilities include:

- AI-proposed data descriptions

- AI-suggested classifications and tagging

- Automated identification of critical data elements for regulatory review

- Agentic workflows to coordinate complex, multi-persona governance processes

This includes supporting audit preparation and regulatory frameworks such as GDPR and SOX compliance.

Rather than replacing human oversight, these capabilities aim to reduce repetitive manual effort while preserving accountability and control.

Governance evolves from policy management to intelligent automation, helping organizations maintain compliance while scaling their data ecosystem.

Join the DataHub community shaping the future of data context

DataHub is built for long-term collaboration with the community. The community drives our roadmap through feedback, pull requests, and the real-world production challenges you share during these town halls.

Three ways to get started today:

- Watch the full town hall recording to see our AI ingestion demo and Foursquare’s showcase in action.

- Join the DataHub Slack community to connect with over 14,000 data practitioners and contribute to new open-source connectors in the #contribute-code channel.

- Explore DataHub Skills on Skills.sh and start building custom integrations for your data stack.

DataHub’s direction is shaped by the practitioners who use it every day. If you’re building with metadata, AI, and governed data systems, your input matters.

Recommended Next Reads